From c30cdd95573ec2533dade97425264a2d48cb7fcb Mon Sep 17 00:00:00 2001

From: lichen <770918727@qq.com>

Date: Fri, 8 Apr 2022 19:48:05 +0800

Subject: [PATCH] update

---

README.md | 154 ------------------------------------------------------

1 file changed, 154 deletions(-)

delete mode 100644 README.md

diff --git a/README.md b/README.md

deleted file mode 100644

index 6e3f387..0000000

--- a/README.md

+++ /dev/null

@@ -1,154 +0,0 @@

-

- -

-

-

-

-

- -

-This repository represents Ultralytics open-source research into future object detection methods, and incorporates lessons learned and best practices evolved over thousands of hours of training and evolution on anonymized client datasets. **All code and models are under active development, and are subject to modification or deletion without notice.** Use at your own risk.

-

-

-

-

- ** GPU Speed measures end-to-end time per image averaged over 5000 COCO val2017 images using a V100 GPU with batch size 32, and includes image preprocessing, PyTorch FP16 inference, postprocessing and NMS. EfficientDet data from [google/automl](https://github.com/google/automl) at batch size 8.

-

-- **January 5, 2021**: [v4.0 release](https://github.com/ultralytics/yolov5/releases/tag/v4.0): nn.SiLU() activations, [Weights & Biases](https://wandb.ai/site?utm_campaign=repo_yolo_readme) logging, [PyTorch Hub](https://pytorch.org/hub/ultralytics_yolov5/) integration.

-- **August 13, 2020**: [v3.0 release](https://github.com/ultralytics/yolov5/releases/tag/v3.0): nn.Hardswish() activations, data autodownload, native AMP.

-- **July 23, 2020**: [v2.0 release](https://github.com/ultralytics/yolov5/releases/tag/v2.0): improved model definition, training and mAP.

-- **June 22, 2020**: [PANet](https://arxiv.org/abs/1803.01534) updates: new heads, reduced parameters, improved speed and mAP [364fcfd](https://github.com/ultralytics/yolov5/commit/364fcfd7dba53f46edd4f04c037a039c0a287972).

-- **June 19, 2020**: [FP16](https://pytorch.org/docs/stable/nn.html#torch.nn.Module.half) as new default for smaller checkpoints and faster inference [d4c6674](https://github.com/ultralytics/yolov5/commit/d4c6674c98e19df4c40e33a777610a18d1961145).

-

-

-## Pretrained Checkpoints

-

-| Model | size | AP val | AP test | AP50 | Speed V100 | FPS V100 | params | GFLOPS |

-|---|---|---|---|---|---|---|---|:---:|

-| [YOLOv5s](https://github.com/ultralytics/yolov5/releases) | 640 | 36.8 | 36.8 | 55.6 | **2.2ms** | **455** | 7.3M | 17.0 |

-| [YOLOv5m](https://github.com/ultralytics/yolov5/releases) | 640 | 44.5 | 44.5 | 63.1 | 2.9ms | 345 | 21.4M | 51.3 |

-| [YOLOv5l](https://github.com/ultralytics/yolov5/releases) | 640 | 48.1 | 48.1 | 66.4 | 3.8ms | 264 | 47.0M | 115.4 |

-| [YOLOv5x](https://github.com/ultralytics/yolov5/releases) | 640 | **50.1** | **50.1** | **68.7** | 6.0ms | 167 | 87.7M | 218.8 |

-| | | | | | | | | |

-| [YOLOv5x](https://github.com/ultralytics/yolov5/releases) + TTA | 832 | **51.9** | **51.9** | **69.6** | 24.9ms | 40 | 87.7M | 1005.3 |

-

-

-

-** AP test denotes COCO [test-dev2017](http://cocodataset.org/#upload) server results; all other AP results denote val2017 accuracy.

-** All AP numbers are for single-model single-scale without ensemble or TTA. **Reproduce mAP** by `python test.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65`

-** Speed GPU is averaged over 5000 COCO val2017 images using a GCP [n1-standard-16](https://cloud.google.com/compute/docs/machine-types#n1_standard_machine_types) V100 instance, and includes image preprocessing, FP16 inference, postprocessing and NMS. NMS is 1-2ms/img. **Reproduce speed** by `python test.py --data coco.yaml --img 640 --conf 0.25 --iou 0.45`

-** All checkpoints are trained to 300 epochs with default settings and hyperparameters (no autoaugmentation).

-** Test Time Augmentation ([TTA](https://github.com/ultralytics/yolov5/issues/303)) runs at 3 image sizes. **Reproduce TTA** by `python test.py --data coco.yaml --img 832 --iou 0.65 --augment`
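
The Speed and FPS columns in the checkpoint table are two views of the same measurement: throughput is approximately the reciprocal of per-image latency. A minimal Python sketch of that conversion follows. The helper name `fps_from_latency_ms` is hypothetical and not part of the repository, and because the listed latencies are rounded, small differences from the published FPS figures are to be expected.

```python
def fps_from_latency_ms(latency_ms: float) -> float:
    """Convert end-to-end time per image (milliseconds) to images per second."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / latency_ms


# Per-image latencies (ms) as listed in the checkpoint table.
latencies = {"YOLOv5s": 2.2, "YOLOv5m": 2.9, "YOLOv5l": 3.8, "YOLOv5x": 6.0}

for name, ms in latencies.items():
    print(f"{name}: {fps_from_latency_ms(ms):.1f} images/s")
```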

-

-

-## Requirements

-

-Python 3.8 or later with all [requirements.txt](https://github.com/ultralytics/yolov5/blob/master/requirements.txt) dependencies installed, including `torch>=1.7`. To install run:

-```bash

-$ pip install -r requirements.txt

-```
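
Before installing, it can help to confirm that the interpreter and any existing PyTorch build meet the stated minimums (Python 3.8, `torch>=1.7`). The sketch below is an illustration only: `meets_minimum` is a hypothetical helper, not part of YOLOv5, and it assumes version strings of the form `1.7.0+cu101`, where a local build suffix follows a `+`.

```python
import re
import sys


def meets_minimum(version: str, minimum: tuple) -> bool:
    """Return True if a dotted version string is at least `minimum`.

    Local build suffixes such as '+cu101' are ignored (assumed format).
    """
    public = version.split("+", 1)[0]
    parts = []
    for piece in public.split("."):
        # Keep only the leading integer of each component (e.g. '0rc1' -> 0).
        match = re.match(r"\d+", piece)
        parts.append(int(match.group()) if match else 0)
    return tuple(parts) >= minimum


print("Python 3.8+:", sys.version_info >= (3, 8))
print("torch 1.7.0+cu101 ok:", meets_minimum("1.7.0+cu101", (1, 7)))
```

With PyTorch installed, the same helper could be called as `meets_minimum(torch.__version__, (1, 7))`.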

-

-

-## Tutorials

-

-* [Train Custom Data](https://github.com/ultralytics/yolov5/wiki/Train-Custom-Data) 🚀 RECOMMENDED

-* [Tips for Best Training Results](https://github.com/ultralytics/yolov5/wiki/Tips-for-Best-Training-Results) ☘️ RECOMMENDED

-* [Weights & Biases Logging](https://github.com/ultralytics/yolov5/issues/1289) 🌟 NEW

-* [Supervisely Ecosystem](https://github.com/ultralytics/yolov5/issues/2518) 🌟 NEW

-* [Multi-GPU Training](https://github.com/ultralytics/yolov5/issues/475)

-* [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) ⭐ NEW

-* [ONNX and TorchScript Export](https://github.com/ultralytics/yolov5/issues/251)

-* [Test-Time Augmentation (TTA)](https://github.com/ultralytics/yolov5/issues/303)

-* [Model Ensembling](https://github.com/ultralytics/yolov5/issues/318)

-* [Model Pruning/Sparsity](https://github.com/ultralytics/yolov5/issues/304)

-* [Hyperparameter Evolution](https://github.com/ultralytics/yolov5/issues/607)

-* [Transfer Learning with Frozen Layers](https://github.com/ultralytics/yolov5/issues/1314) ⭐ NEW

-* [TensorRT Deployment](https://github.com/wang-xinyu/tensorrtx)

-

-

-## Environments

-

-YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including [CUDA](https://developer.nvidia.com/cuda)/[CUDNN](https://developer.nvidia.com/cudnn), [Python](https://www.python.org/) and [PyTorch](https://pytorch.org/) preinstalled):

-

-- **Google Colab and Kaggle** notebooks with free GPU:

-- **Google Cloud** Deep Learning VM. See [GCP Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/GCP-Quickstart)

-- **Amazon** Deep Learning AMI. See [AWS Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/AWS-Quickstart)

-- **Docker Image**. See [Docker Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/Docker-Quickstart)

-

-## Inference

-

-detect.py runs inference on a variety of sources, downloading models automatically from the [latest YOLOv5 release](https://github.com/ultralytics/yolov5/releases) and saving results to `runs/detect`.

-```bash

-$ python detect.py --source 0 # webcam

- file.jpg # image

- file.mp4 # video

- path/ # directory

- path/*.jpg # glob

- rtsp://170.93.143.139/rtplive/470011e600ef003a004ee33696235daa # rtsp stream

- rtmp://192.168.1.105/live/test # rtmp stream

- http://112.50.243.8/PLTV/88888888/224/3221225900/1.m3u8 # http stream

-```

-

-To run inference on example images in `data/images`:

-```bash

-$ python detect.py --source data/images --weights yolov5s.pt --conf 0.25

-

-Namespace(agnostic_nms=False, augment=False, classes=None, conf_thres=0.25, device='', exist_ok=False, img_size=640, iou_thres=0.45, name='exp', project='runs/detect', save_conf=False, save_txt=False, source='data/images/', update=False, view_img=False, weights=['yolov5s.pt'])

-YOLOv5 v4.0-96-g83dc1b4 torch 1.7.0+cu101 CUDA:0 (Tesla V100-SXM2-16GB, 16160.5MB)

-

-Fusing layers...

-Model Summary: 224 layers, 7266973 parameters, 0 gradients, 17.0 GFLOPS

-image 1/2 /content/yolov5/data/images/bus.jpg: 640x480 4 persons, 1 bus, Done. (0.010s)

-image 2/2 /content/yolov5/data/images/zidane.jpg: 384x640 2 persons, 1 tie, Done. (0.011s)

-Results saved to runs/detect/exp2

-Done. (0.103s)

-```

-

-

-

-### PyTorch Hub

-

-To run **batched inference** with YOLOv5 and [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36):

-```python

-import torch

-

-# Model

-model = torch.hub.load('ultralytics/yolov5', 'yolov5s')

-

-# Images

-dir = 'https://github.com/ultralytics/yolov5/raw/master/data/images/'

-imgs = [dir + f for f in ('zidane.jpg', 'bus.jpg')] # batch of images

-

-# Inference

-results = model(imgs)

-results.print() # or .show(), .save()

-```

-

-

-## Training

-

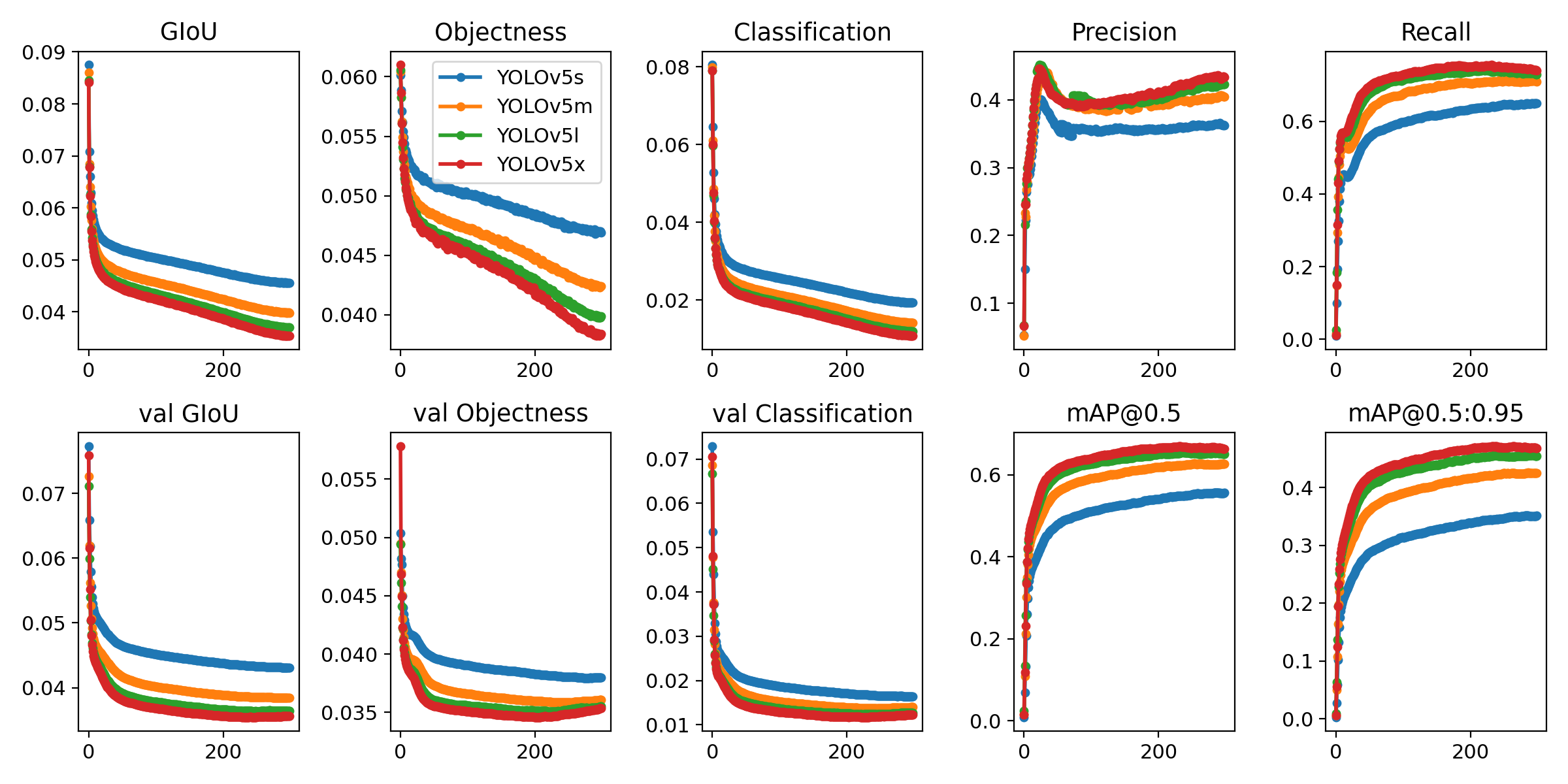

-Run commands below to reproduce results on [COCO](https://github.com/ultralytics/yolov5/blob/master/data/scripts/get_coco.sh) dataset (dataset auto-downloads on first use). Training times for YOLOv5s/m/l/x are 2/4/6/8 days on a single V100 (multi-GPU times faster). Use the largest `--batch-size` your GPU allows (batch sizes shown for 16 GB devices).

-```bash

-$ python train.py --data coco.yaml --cfg yolov5s.yaml --weights '' --batch-size 64

- yolov5m 40

- yolov5l 24

- yolov5x 16

-```
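
The shorthand in the training block stands for four separate commands, one per model, each with its own batch size. As a sketch, the following Python snippet expands that shorthand into full command lines. The `BATCH_SIZES` mapping and the `train_command` helper are illustrative names, and the flags are copied from the command shown in the README rather than checked against any particular release of `train.py`.

```python
import shlex

# Batch sizes quoted in the README for 16 GB devices.
BATCH_SIZES = {"yolov5s": 64, "yolov5m": 40, "yolov5l": 24, "yolov5x": 16}


def train_command(model: str, batch_size: int) -> str:
    """Build the COCO training command line for one model."""
    args = [
        "python", "train.py",
        "--data", "coco.yaml",
        "--cfg", f"{model}.yaml",
        "--weights", "",  # empty string: no pretrained weights are loaded
        "--batch-size", str(batch_size),
    ]
    # shlex.quote renders the empty --weights value as '' for the shell.
    return " ".join(shlex.quote(a) for a in args)


for model, batch in BATCH_SIZES.items():
    print(train_command(model, batch))
```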

-

-

-

-## Citation

-

-[DOI](https://zenodo.org/badge/latestdoi/264818686)

-

-

-## About Us

-

-Ultralytics is a U.S.-based particle physics and AI startup with over 6 years of expertise supporting government, academic and business clients. We offer a wide range of vision AI services, spanning from simple expert advice up to delivery of fully customized, end-to-end production solutions, including:

-- **Cloud-based AI** systems operating on **hundreds of HD video streams in realtime.**

-- **Edge AI** integrated into custom iOS and Android apps for realtime **30 FPS video inference.**

-- **Custom data training**, hyperparameter evolution, and model exportation to any destination.

-

-For business inquiries and professional support requests please visit us at https://www.ultralytics.com.

-

-

-## Contact

-

-**Issues should be raised directly in the repository.** For business inquiries or professional support requests please visit https://www.ultralytics.com or email Glenn Jocher at glenn.jocher@ultralytics.com.

-

-

- ** GPU Speed measures end-to-end time per image averaged over 5000 COCO val2017 images using a V100 GPU with batch size 32, and includes image preprocessing, PyTorch FP16 inference, postprocessing and NMS. EfficientDet data from [google/automl](https://github.com/google/automl) at batch size 8.

-

-- **January 5, 2021**: [v4.0 release](https://github.com/ultralytics/yolov5/releases/tag/v4.0): nn.SiLU() activations, [Weights & Biases](https://wandb.ai/site?utm_campaign=repo_yolo_readme) logging, [PyTorch Hub](https://pytorch.org/hub/ultralytics_yolov5/) integration.

-- **August 13, 2020**: [v3.0 release](https://github.com/ultralytics/yolov5/releases/tag/v3.0): nn.Hardswish() activations, data autodownload, native AMP.

-- **July 23, 2020**: [v2.0 release](https://github.com/ultralytics/yolov5/releases/tag/v2.0): improved model definition, training and mAP.

-- **June 22, 2020**: [PANet](https://arxiv.org/abs/1803.01534) updates: new heads, reduced parameters, improved speed and mAP [364fcfd](https://github.com/ultralytics/yolov5/commit/364fcfd7dba53f46edd4f04c037a039c0a287972).

-- **June 19, 2020**: [FP16](https://pytorch.org/docs/stable/nn.html#torch.nn.Module.half) as new default for smaller checkpoints and faster inference [d4c6674](https://github.com/ultralytics/yolov5/commit/d4c6674c98e19df4c40e33a777610a18d1961145).

-

-

-## Pretrained Checkpoints

-

-| Model | size | APval | APtest | AP50 | SpeedV100 | FPSV100 || params | GFLOPS |

-|---------- |------ |------ |------ |------ | -------- | ------| ------ |------ | :------: |

-| [YOLOv5s](https://github.com/ultralytics/yolov5/releases) |640 |36.8 |36.8 |55.6 |**2.2ms** |**455** ||7.3M |17.0

-| [YOLOv5m](https://github.com/ultralytics/yolov5/releases) |640 |44.5 |44.5 |63.1 |2.9ms |345 ||21.4M |51.3

-| [YOLOv5l](https://github.com/ultralytics/yolov5/releases) |640 |48.1 |48.1 |66.4 |3.8ms |264 ||47.0M |115.4

-| [YOLOv5x](https://github.com/ultralytics/yolov5/releases) |640 |**50.1** |**50.1** |**68.7** |6.0ms |167 ||87.7M |218.8

-| | | | | | | || |

-| [YOLOv5x](https://github.com/ultralytics/yolov5/releases) + TTA |832 |**51.9** |**51.9** |**69.6** |24.9ms |40 ||87.7M |1005.3

-

-

-

-** APtest denotes COCO [test-dev2017](http://cocodataset.org/#upload) server results, all other AP results denote val2017 accuracy.

-** All AP numbers are for single-model single-scale without ensemble or TTA. **Reproduce mAP** by `python test.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65`

-** SpeedGPU averaged over 5000 COCO val2017 images using a GCP [n1-standard-16](https://cloud.google.com/compute/docs/machine-types#n1_standard_machine_types) V100 instance, and includes image preprocessing, FP16 inference, postprocessing and NMS. NMS is 1-2ms/img. **Reproduce speed** by `python test.py --data coco.yaml --img 640 --conf 0.25 --iou 0.45`

-** All checkpoints are trained to 300 epochs with default settings and hyperparameters (no autoaugmentation).

-** Test Time Augmentation ([TTA](https://github.com/ultralytics/yolov5/issues/303)) runs at 3 image sizes. **Reproduce TTA** by `python test.py --data coco.yaml --img 832 --iou 0.65 --augment`

-

-

-## Requirements

-

-Python 3.8 or later with all [requirements.txt](https://github.com/ultralytics/yolov5/blob/master/requirements.txt) dependencies installed, including `torch>=1.7`. To install run:

-```bash

-$ pip install -r requirements.txt

-```

-

-

-## Tutorials

-

-* [Train Custom Data](https://github.com/ultralytics/yolov5/wiki/Train-Custom-Data) 🚀 RECOMMENDED

-* [Tips for Best Training Results](https://github.com/ultralytics/yolov5/wiki/Tips-for-Best-Training-Results) ☘️ RECOMMENDED

-* [Weights & Biases Logging](https://github.com/ultralytics/yolov5/issues/1289) 🌟 NEW

-* [Supervisely Ecosystem](https://github.com/ultralytics/yolov5/issues/2518) 🌟 NEW

-* [Multi-GPU Training](https://github.com/ultralytics/yolov5/issues/475)

-* [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) ⭐ NEW

-* [ONNX and TorchScript Export](https://github.com/ultralytics/yolov5/issues/251)

-* [Test-Time Augmentation (TTA)](https://github.com/ultralytics/yolov5/issues/303)

-* [Model Ensembling](https://github.com/ultralytics/yolov5/issues/318)

-* [Model Pruning/Sparsity](https://github.com/ultralytics/yolov5/issues/304)

-* [Hyperparameter Evolution](https://github.com/ultralytics/yolov5/issues/607)

-* [Transfer Learning with Frozen Layers](https://github.com/ultralytics/yolov5/issues/1314) ⭐ NEW

-* [TensorRT Deployment](https://github.com/wang-xinyu/tensorrtx)

-

-

-## Environments

-

-YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including [CUDA](https://developer.nvidia.com/cuda)/[CUDNN](https://developer.nvidia.com/cudnn), [Python](https://www.python.org/) and [PyTorch](https://pytorch.org/) preinstalled):

-

-- **Google Colab and Kaggle** notebooks with free GPU:

** GPU Speed measures end-to-end time per image averaged over 5000 COCO val2017 images using a V100 GPU with batch size 32, and includes image preprocessing, PyTorch FP16 inference, postprocessing and NMS. EfficientDet data from [google/automl](https://github.com/google/automl) at batch size 8.

-

-- **January 5, 2021**: [v4.0 release](https://github.com/ultralytics/yolov5/releases/tag/v4.0): nn.SiLU() activations, [Weights & Biases](https://wandb.ai/site?utm_campaign=repo_yolo_readme) logging, [PyTorch Hub](https://pytorch.org/hub/ultralytics_yolov5/) integration.

-- **August 13, 2020**: [v3.0 release](https://github.com/ultralytics/yolov5/releases/tag/v3.0): nn.Hardswish() activations, data autodownload, native AMP.

-- **July 23, 2020**: [v2.0 release](https://github.com/ultralytics/yolov5/releases/tag/v2.0): improved model definition, training and mAP.

-- **June 22, 2020**: [PANet](https://arxiv.org/abs/1803.01534) updates: new heads, reduced parameters, improved speed and mAP [364fcfd](https://github.com/ultralytics/yolov5/commit/364fcfd7dba53f46edd4f04c037a039c0a287972).

-- **June 19, 2020**: [FP16](https://pytorch.org/docs/stable/nn.html#torch.nn.Module.half) as new default for smaller checkpoints and faster inference [d4c6674](https://github.com/ultralytics/yolov5/commit/d4c6674c98e19df4c40e33a777610a18d1961145).

-

-

-## Pretrained Checkpoints

-

-| Model | size | APval | APtest | AP50 | SpeedV100 | FPSV100 || params | GFLOPS |

-|---------- |------ |------ |------ |------ | -------- | ------| ------ |------ | :------: |

-| [YOLOv5s](https://github.com/ultralytics/yolov5/releases) |640 |36.8 |36.8 |55.6 |**2.2ms** |**455** ||7.3M |17.0

-| [YOLOv5m](https://github.com/ultralytics/yolov5/releases) |640 |44.5 |44.5 |63.1 |2.9ms |345 ||21.4M |51.3

-| [YOLOv5l](https://github.com/ultralytics/yolov5/releases) |640 |48.1 |48.1 |66.4 |3.8ms |264 ||47.0M |115.4

-| [YOLOv5x](https://github.com/ultralytics/yolov5/releases) |640 |**50.1** |**50.1** |**68.7** |6.0ms |167 ||87.7M |218.8

-| | | | | | | || |

-| [YOLOv5x](https://github.com/ultralytics/yolov5/releases) + TTA |832 |**51.9** |**51.9** |**69.6** |24.9ms |40 ||87.7M |1005.3

-

-

-

-** APtest denotes COCO [test-dev2017](http://cocodataset.org/#upload) server results, all other AP results denote val2017 accuracy.

-** All AP numbers are for single-model single-scale without ensemble or TTA. **Reproduce mAP** by `python test.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65`

-** SpeedGPU averaged over 5000 COCO val2017 images using a GCP [n1-standard-16](https://cloud.google.com/compute/docs/machine-types#n1_standard_machine_types) V100 instance, and includes image preprocessing, FP16 inference, postprocessing and NMS. NMS is 1-2ms/img. **Reproduce speed** by `python test.py --data coco.yaml --img 640 --conf 0.25 --iou 0.45`

-** All checkpoints are trained to 300 epochs with default settings and hyperparameters (no autoaugmentation).

-** Test Time Augmentation ([TTA](https://github.com/ultralytics/yolov5/issues/303)) runs at 3 image sizes. **Reproduce TTA** by `python test.py --data coco.yaml --img 832 --iou 0.65 --augment`

-

-

-## Requirements

-

-Python 3.8 or later with all [requirements.txt](https://github.com/ultralytics/yolov5/blob/master/requirements.txt) dependencies installed, including `torch>=1.7`. To install run:

-```bash

-$ pip install -r requirements.txt

-```

-

-

-## Tutorials

-

-* [Train Custom Data](https://github.com/ultralytics/yolov5/wiki/Train-Custom-Data) 🚀 RECOMMENDED

-* [Tips for Best Training Results](https://github.com/ultralytics/yolov5/wiki/Tips-for-Best-Training-Results) ☘️ RECOMMENDED

-* [Weights & Biases Logging](https://github.com/ultralytics/yolov5/issues/1289) 🌟 NEW

-* [Supervisely Ecosystem](https://github.com/ultralytics/yolov5/issues/2518) 🌟 NEW

-* [Multi-GPU Training](https://github.com/ultralytics/yolov5/issues/475)

-* [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) ⭐ NEW

-* [ONNX and TorchScript Export](https://github.com/ultralytics/yolov5/issues/251)

-* [Test-Time Augmentation (TTA)](https://github.com/ultralytics/yolov5/issues/303)

-* [Model Ensembling](https://github.com/ultralytics/yolov5/issues/318)

-* [Model Pruning/Sparsity](https://github.com/ultralytics/yolov5/issues/304)

-* [Hyperparameter Evolution](https://github.com/ultralytics/yolov5/issues/607)

-* [Transfer Learning with Frozen Layers](https://github.com/ultralytics/yolov5/issues/1314) ⭐ NEW

-* [TensorRT Deployment](https://github.com/wang-xinyu/tensorrtx)

-

-

-## Environments

-

-YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including [CUDA](https://developer.nvidia.com/cuda)/[CUDNN](https://developer.nvidia.com/cudnn), [Python](https://www.python.org/) and [PyTorch](https://pytorch.org/) preinstalled):

-

-- **Google Colab and Kaggle** notebooks with free GPU:  -- **Google Cloud** Deep Learning VM. See [GCP Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/GCP-Quickstart)

-- **Amazon** Deep Learning AMI. See [AWS Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/AWS-Quickstart)

-- **Docker Image**. See [Docker Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/Docker-Quickstart)

-- **Google Cloud** Deep Learning VM. See [GCP Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/GCP-Quickstart)

-- **Amazon** Deep Learning AMI. See [AWS Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/AWS-Quickstart)

-- **Docker Image**. See [Docker Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/Docker-Quickstart)  -

-

-## Inference

-

-detect.py runs inference on a variety of sources, downloading models automatically from the [latest YOLOv5 release](https://github.com/ultralytics/yolov5/releases) and saving results to `runs/detect`.

-```bash

-$ python detect.py --source 0 # webcam

- file.jpg # image

- file.mp4 # video

- path/ # directory

- path/*.jpg # glob

- rtsp://170.93.143.139/rtplive/470011e600ef003a004ee33696235daa # rtsp stream

- rtmp://192.168.1.105/live/test # rtmp stream

- http://112.50.243.8/PLTV/88888888/224/3221225900/1.m3u8 # http stream

-```

-

-To run inference on example images in `data/images`:

-```bash

-$ python detect.py --source data/images --weights yolov5s.pt --conf 0.25

-

-Namespace(agnostic_nms=False, augment=False, classes=None, conf_thres=0.25, device='', exist_ok=False, img_size=640, iou_thres=0.45, name='exp', project='runs/detect', save_conf=False, save_txt=False, source='data/images/', update=False, view_img=False, weights=['yolov5s.pt'])

-YOLOv5 v4.0-96-g83dc1b4 torch 1.7.0+cu101 CUDA:0 (Tesla V100-SXM2-16GB, 16160.5MB)

-

-Fusing layers...

-Model Summary: 224 layers, 7266973 parameters, 0 gradients, 17.0 GFLOPS

-image 1/2 /content/yolov5/data/images/bus.jpg: 640x480 4 persons, 1 bus, Done. (0.010s)

-image 2/2 /content/yolov5/data/images/zidane.jpg: 384x640 2 persons, 1 tie, Done. (0.011s)

-Results saved to runs/detect/exp2

-Done. (0.103s)

-```

-

-

-

-## Inference

-

-detect.py runs inference on a variety of sources, downloading models automatically from the [latest YOLOv5 release](https://github.com/ultralytics/yolov5/releases) and saving results to `runs/detect`.

-```bash

-$ python detect.py --source 0 # webcam

- file.jpg # image

- file.mp4 # video

- path/ # directory

- path/*.jpg # glob

- rtsp://170.93.143.139/rtplive/470011e600ef003a004ee33696235daa # rtsp stream

- rtmp://192.168.1.105/live/test # rtmp stream

- http://112.50.243.8/PLTV/88888888/224/3221225900/1.m3u8 # http stream

-```

-

-To run inference on example images in `data/images`:

-```bash

-$ python detect.py --source data/images --weights yolov5s.pt --conf 0.25

-

-Namespace(agnostic_nms=False, augment=False, classes=None, conf_thres=0.25, device='', exist_ok=False, img_size=640, iou_thres=0.45, name='exp', project='runs/detect', save_conf=False, save_txt=False, source='data/images/', update=False, view_img=False, weights=['yolov5s.pt'])

-YOLOv5 v4.0-96-g83dc1b4 torch 1.7.0+cu101 CUDA:0 (Tesla V100-SXM2-16GB, 16160.5MB)

-

-Fusing layers...

-Model Summary: 224 layers, 7266973 parameters, 0 gradients, 17.0 GFLOPS

-image 1/2 /content/yolov5/data/images/bus.jpg: 640x480 4 persons, 1 bus, Done. (0.010s)

-image 2/2 /content/yolov5/data/images/zidane.jpg: 384x640 2 persons, 1 tie, Done. (0.011s)

-Results saved to runs/detect/exp2

-Done. (0.103s)

-```

-

-### PyTorch Hub

-

-To run **batched inference** with YOLOv5 and [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36):

-```python

-import torch

-

-# Model

-model = torch.hub.load('ultralytics/yolov5', 'yolov5s')

-

-# Images

-base_url = 'https://github.com/ultralytics/yolov5/raw/master/data/images/'  # avoids shadowing the built-in dir()

-imgs = [base_url + f for f in ('zidane.jpg', 'bus.jpg')]  # batch of images

-

-# Inference

-results = model(imgs)

-results.print() # or .show(), .save()

-```

-

-

-## Training

-

-Run the commands below to reproduce results on the [COCO](https://github.com/ultralytics/yolov5/blob/master/data/scripts/get_coco.sh) dataset (the dataset auto-downloads on first use). Training times for YOLOv5s/m/l/x are 2/4/6/8 days on a single V100 (multi-GPU training is faster). Use the largest `--batch-size` your GPU allows (the batch sizes shown are for 16 GB devices).

-```bash

-$ python train.py --data coco.yaml --cfg yolov5s.yaml --weights '' --batch-size 64

-                                         yolov5m                                40

-                                         yolov5l                                24

-                                         yolov5x                                16

-```
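The shorthand above stands for four separate commands, one per model, each with its own batch size. As a minimal sketch, the full command lines can be generated from that table. The helper name `train_command` and the dictionary `BATCH_SIZES` are hypothetical; only the flags and values come from the block above.

```python
# Batch sizes quoted above for 16 GB devices.
BATCH_SIZES = {'yolov5s': 64, 'yolov5m': 40, 'yolov5l': 24, 'yolov5x': 16}


def train_command(model: str) -> str:
    """Build the from-scratch COCO training command for one model."""
    batch = BATCH_SIZES[model]  # raises KeyError for unknown model names
    return (f"python train.py --data coco.yaml --cfg {model}.yaml "
            f"--weights '' --batch-size {batch}")


for name in BATCH_SIZES:
    print(train_command(name))
```

On a GPU with more or less memory than 16 GB, the values in `BATCH_SIZES` would need adjusting by hand.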

-

-

-

-## Citation

-

-[](https://zenodo.org/badge/latestdoi/264818686)

-

-

-## About Us

-

-Ultralytics is a U.S.-based particle physics and AI startup with over 6 years of expertise supporting government, academic and business clients. We offer a wide range of vision AI services, spanning from simple expert advice up to delivery of fully customized, end-to-end production solutions, including:

-- **Cloud-based AI** systems operating on **hundreds of HD video streams in realtime.**

-- **Edge AI** integrated into custom iOS and Android apps for realtime **30 FPS video inference.**

-- **Custom data training**, hyperparameter evolution, and model exportation to any destination.

-

-For business inquiries and professional support requests please visit us at https://www.ultralytics.com.

-

-

-## Contact

-

-**Issues should be raised directly in the repository.** For business inquiries or professional support requests please visit https://www.ultralytics.com or email Glenn Jocher at glenn.jocher@ultralytics.com.
