
docs/en/models/fast-sam.md


---
comments: true
description: Explore FastSAM, a CNN-based solution for real-time object segmentation in images, offering enhanced user interaction, computational efficiency, and adaptability across vision tasks.
keywords: FastSAM, machine learning, CNN-based solution, object segmentation, real-time solution, Ultralytics, vision tasks, image processing, industrial applications, user interaction
---

# Fast Segment Anything Model (FastSAM)

The Fast Segment Anything Model (FastSAM) is a novel, real-time CNN-based solution for the Segment Anything task. This task is designed to segment any object within an image based on various possible user interaction prompts. FastSAM significantly reduces computational demands while maintaining competitive performance, making it a practical choice for a variety of vision tasks.

## Overview

|

||||

|

||||

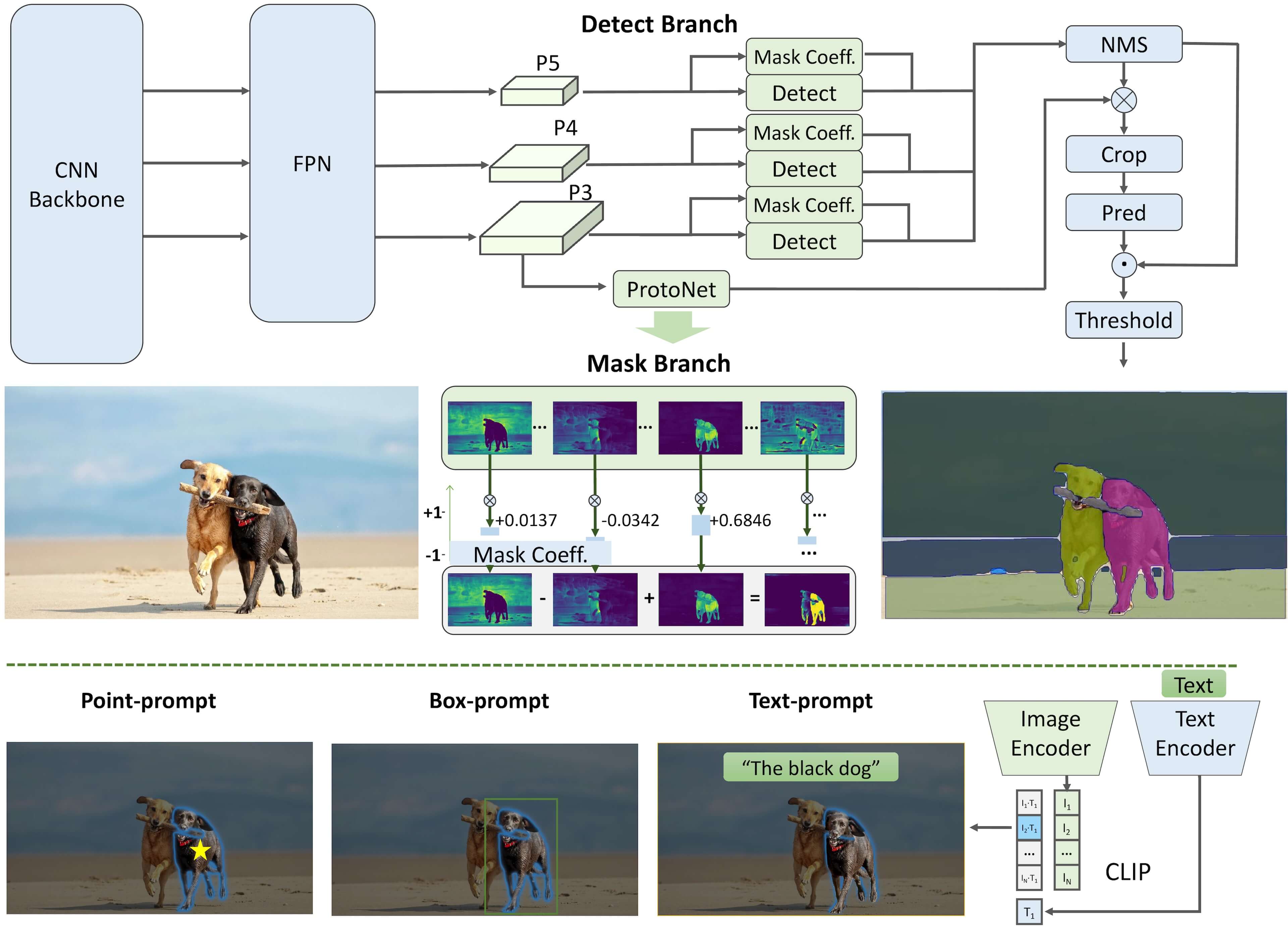

FastSAM is designed to address the limitations of the [Segment Anything Model (SAM)](sam.md), a heavy Transformer model with substantial computational resource requirements. The FastSAM decouples the segment anything task into two sequential stages: all-instance segmentation and prompt-guided selection. The first stage uses [YOLOv8-seg](../tasks/segment.md) to produce the segmentation masks of all instances in the image. In the second stage, it outputs the region-of-interest corresponding to the prompt.

|

||||

|

||||
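To make the second stage more concrete, the sketch below selects among precomputed stage-one masks using point prompts. It follows the label convention used in the prediction example on this page (1 = foreground, 0 = background). This is a simplified, library-independent illustration and not FastSAM's or Ultralytics' actual implementation. The function name `select_masks_by_points`, the list-of-rows mask format, and the keep rule (a mask must contain at least one foreground point and no background point) are all assumptions.

```python
from typing import List, Sequence, Tuple

Mask = List[List[int]]  # hypothetical representation: rows of 0/1 pixels


def select_masks_by_points(
    masks: Sequence[Mask],
    points: Sequence[Tuple[int, int]],
    labels: Sequence[int],
) -> Mask:
    """Merge the stage-one masks selected by point prompts (illustrative rule only).

    A mask is kept if it contains at least one foreground point (label 1)
    and no background point (label 0). Points are given as (x, y).
    """
    if not masks:
        raise ValueError("need at least one candidate mask")
    height, width = len(masks[0]), len(masks[0][0])
    combined = [[0] * width for _ in range(height)]
    for mask in masks:
        # Labels of the prompt points that fall inside this mask
        hits = [labels[i] for i, (x, y) in enumerate(points) if mask[y][x]]
        if 1 in hits and 0 not in hits:
            # Union the kept mask into the result
            for r in range(height):
                for c in range(width):
                    combined[r][c] |= mask[r][c]
    return combined


# Two 4x4 candidate masks from "stage one": the left half and the right half
left = [[1, 1, 0, 0] for _ in range(4)]
right = [[0, 0, 1, 1] for _ in range(4)]

# Foreground point in the left half, background point in the right half
result = select_masks_by_points([left, right], points=[(0, 1), (3, 2)], labels=[1, 0])
print(result)  # [[1, 1, 0, 0], [1, 1, 0, 0], [1, 1, 0, 0], [1, 1, 0, 0]]
```

Because stage one has already produced every mask, this prompt step reduces to cheap lookups over existing masks. That is one reason the decoupled design keeps interactive prompting fast.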

## Key Features

1. **Real-time Solution:** By leveraging the computational efficiency of CNNs, FastSAM provides a real-time solution for the segment anything task, making it valuable for industrial applications that require quick results.

2. **Efficiency and Performance:** FastSAM significantly reduces computational and resource demands without compromising performance quality. It achieves performance comparable to SAM with drastically fewer computational resources, enabling real-time applications.

3. **Prompt-guided Segmentation:** FastSAM can segment any object within an image guided by various possible user interaction prompts, providing flexibility and adaptability in different scenarios.

4. **Based on YOLOv8-seg:** FastSAM is based on [YOLOv8-seg](../tasks/segment.md), an object detector equipped with an instance segmentation branch. This allows it to effectively produce segmentation masks for all instances in an image.

5. **Competitive Results on Benchmarks:** On the object proposal task on MS COCO, FastSAM achieves high scores at a significantly faster speed than [SAM](sam.md) on a single NVIDIA RTX 3090, demonstrating its efficiency and capability.

6. **Practical Applications:** The proposed approach provides a new, practical solution for a large number of vision tasks at very high speed, tens or hundreds of times faster than current methods.

7. **Model Compression Feasibility:** FastSAM demonstrates a path that can significantly reduce computational effort by introducing an artificial prior into the model structure, opening new possibilities for large model architectures for general vision tasks.

## Available Models, Supported Tasks, and Operating Modes

This table presents the available models with their specific pre-trained weights, the tasks they support, and their compatibility with different operating modes like [Inference](../modes/predict.md), [Validation](../modes/val.md), [Training](../modes/train.md), and [Export](../modes/export.md), indicated by ✅ emojis for supported modes and ❌ emojis for unsupported modes.

| Model Type | Pre-trained Weights | Tasks Supported | Inference | Validation | Training | Export |
|------------|---------------------------------------------------------------------------------------------|----------------------------------------------|-----------|------------|----------|--------|
| FastSAM-s | [FastSAM-s.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/FastSAM-s.pt) | [Instance Segmentation](../tasks/segment.md) | ✅ | ❌ | ❌ | ✅ |
| FastSAM-x | [FastSAM-x.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/FastSAM-x.pt) | [Instance Segmentation](../tasks/segment.md) | ✅ | ❌ | ❌ | ✅ |

## Usage Examples

The FastSAM models are easy to integrate into your Python applications. Ultralytics provides a user-friendly Python API and CLI commands to streamline development.

### Predict Usage

To segment an image, run the model on it (calling the model directly is equivalent to calling its `predict` method) and then apply a prompt, as shown below:

!!! Example

=== "Python"

```python
from ultralytics import FastSAM
from ultralytics.models.fastsam import FastSAMPrompt

# Define an inference source
source = 'path/to/bus.jpg'

# Create a FastSAM model
model = FastSAM('FastSAM-s.pt')  # or FastSAM-x.pt

# Run inference on an image
everything_results = model(source, device='cpu', retina_masks=True, imgsz=1024, conf=0.4, iou=0.9)

# Prepare a Prompt Process object
prompt_process = FastSAMPrompt(source, everything_results, device='cpu')

# Everything prompt
ann = prompt_process.everything_prompt()

# Box prompt: bbox is [x1, y1, x2, y2] (default [0, 0, 0, 0])
ann = prompt_process.box_prompt(bbox=[200, 200, 300, 300])

# Text prompt
ann = prompt_process.text_prompt(text='a photo of a dog')

# Point prompt
# points: [[x1, y1], [x2, y2], ...] (default [[0, 0]])
# pointlabel: one label per point, 1 = foreground, 0 = background (default [0])
ann = prompt_process.point_prompt(points=[[200, 200]], pointlabel=[1])

# Plot the annotations of the last prompt and save them to the current directory
prompt_process.plot(annotations=ann, output='./')
```

=== "CLI"

```bash
# Load a FastSAM model and segment everything with it
yolo segment predict model=FastSAM-s.pt source=path/to/bus.jpg imgsz=640
```

This snippet demonstrates the simplicity of loading a pre-trained model and running a prediction on an image.
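The `conf=0.4` and `iou=0.9` arguments in the Python example control which stage-one instances survive post-processing. In Ultralytics, `conf` is the minimum confidence score for a detection, and `iou` is the IoU threshold used by non-maximum suppression (NMS). The stdlib-only sketch below illustrates that idea on boxes. It is a simplified greedy NMS, not Ultralytics' vectorized PyTorch implementation, and the helper names `box_iou` and `filter_and_nms` are hypothetical.

```python
from typing import List, Sequence, Tuple

# (x1, y1, x2, y2) in pixels
Box = Tuple[float, float, float, float]


def box_iou(a: Box, b: Box) -> float:
    """Intersection over union (IoU) of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def filter_and_nms(boxes: Sequence[Box], scores: Sequence[float],
                   conf: float = 0.4, iou: float = 0.9) -> List[int]:
    """Indices of boxes kept after a confidence filter and greedy NMS (hypothetical helper)."""
    # 1) Drop candidates at or below the confidence threshold, then visit the rest best-first
    order = sorted((i for i, s in enumerate(scores) if s > conf),
                   key=lambda i: scores[i], reverse=True)
    kept: List[int] = []
    for i in order:
        # 2) Suppress a box that overlaps an already-kept, higher-scoring box by more than `iou`
        if all(box_iou(boxes[i], boxes[k]) <= iou for k in kept):
            kept.append(i)
    return kept


boxes = [(0, 0, 10, 10), (0, 0, 10, 9.5), (20, 20, 30, 30), (40, 40, 50, 50)]
scores = [0.9, 0.8, 0.7, 0.3]
print(filter_and_nms(boxes, scores, conf=0.4, iou=0.9))   # [0, 2]
print(filter_and_nms(boxes, scores, conf=0.4, iou=0.99))  # [0, 1, 2]
```

In this sketch, a high `iou` threshold suppresses only near-duplicate boxes (the first two boxes overlap with IoU 0.95), so overlapping but distinct instances survive. Lowering `conf` lets weaker candidates through.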

### Val Usage

Validation of the model on a dataset can be done as follows:

!!! Example

=== "Python"

```python
from ultralytics import FastSAM

# Create a FastSAM model
model = FastSAM('FastSAM-s.pt')  # or FastSAM-x.pt

# Validate the model
results = model.val(data='coco8-seg.yaml')
```

=== "CLI"

```bash
# Load a FastSAM model and validate it on the COCO8-seg example dataset at image size 640
yolo segment val model=FastSAM-s.pt data=coco8-seg.yaml imgsz=640
```

Please note that FastSAM only supports detection and segmentation of a single object class: it recognizes and segments all objects as the same class. Therefore, when preparing a dataset, convert all object category IDs to 0.
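For datasets in the YOLO text-label format (one `*.txt` file per image, one object per line, class ID first, followed by normalized box or polygon coordinates), one way to do this conversion is to rewrite the first token on every line. The sketch below assumes that layout. The function name `convert_labels_to_single_class` and the throwaway demo directory are hypothetical. Back up your labels before running anything like it. If Ultralytics has already cached these labels, you may also need to delete the `*.cache` file so the change is picked up.

```python
import tempfile
from pathlib import Path


def convert_labels_to_single_class(labels_dir: str) -> int:
    """Set the class ID of every object in YOLO-format *.txt label files to 0.

    Assumes each non-empty line looks like '<class_id> <coords...>'.
    Returns the number of lines whose class ID was changed.
    """
    changed = 0
    for label_file in sorted(Path(labels_dir).rglob("*.txt")):
        new_lines = []
        for line in label_file.read_text().splitlines():
            parts = line.split()
            if not parts:
                continue  # drop blank lines
            if parts[0] != "0":
                changed += 1
            new_lines.append(" ".join(["0"] + parts[1:]))
        label_file.write_text("\n".join(new_lines) + ("\n" if new_lines else ""))
    return changed


# Demo on a temporary directory; point the function at your own labels folder instead
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "img1.txt"
    sample.write_text("3 0.5 0.5 0.2 0.2\n0 0.1 0.1 0.05 0.05\n")
    changed = convert_labels_to_single_class(tmp)
    converted = sample.read_text()

print(changed)  # 1
print(converted)
```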

## FastSAM Official Usage

FastSAM is also available directly from the [CASIA-IVA-Lab/FastSAM](https://github.com/CASIA-IVA-Lab/FastSAM) repository. Here is a brief overview of the typical steps you might take to use FastSAM:

### Installation

1. Clone the FastSAM repository:

```shell
git clone https://github.com/CASIA-IVA-Lab/FastSAM.git
```

2. Create and activate a Conda environment with Python 3.9:

```shell
conda create -n FastSAM python=3.9
conda activate FastSAM
```

3. Navigate to the cloned repository and install the required packages:

```shell
cd FastSAM
pip install -r requirements.txt
```

4. Install the CLIP model:

```shell
pip install git+https://github.com/openai/CLIP.git
```

### Example Usage

1. Download a [model checkpoint](https://drive.google.com/file/d/1m1sjY4ihXBU1fZXdQ-Xdj-mDltW-2Rqv/view?usp=sharing).

2. Use FastSAM for inference. Example commands:

- Segment everything in an image:

```shell
python Inference.py --model_path ./weights/FastSAM.pt --img_path ./images/dogs.jpg
```

- Segment specific objects using a text prompt:

```shell
python Inference.py --model_path ./weights/FastSAM.pt --img_path ./images/dogs.jpg --text_prompt "the yellow dog"
```

- Segment objects within a bounding box (provide box coordinates in xywh format):

```shell
python Inference.py --model_path ./weights/FastSAM.pt --img_path ./images/dogs.jpg --box_prompt "[570,200,230,400]"
```

- Segment objects near specific points (`--point_label` marks each point as foreground `1` or background `0`):

```shell
python Inference.py --model_path ./weights/FastSAM.pt --img_path ./images/dogs.jpg --point_prompt "[[520,360],[620,300]]" --point_label "[1,0]"
```
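The official repository's `--box_prompt` example above takes the box as `[x, y, w, h]`, while the Ultralytics `box_prompt(bbox=...)` example earlier on this page uses `[x1, y1, x2, y2]`. When moving a box between the two, a small conversion is needed. The helpers below are a hypothetical sketch that assumes `x, y` is the box's top-left corner.

```python
from typing import List, Sequence


def xywh_to_xyxy(box: Sequence[float]) -> List[float]:
    """Convert [x, y, w, h] (top-left corner plus size) to [x1, y1, x2, y2]."""
    x, y, w, h = box
    return [x, y, x + w, y + h]


def xyxy_to_xywh(box: Sequence[float]) -> List[float]:
    """Inverse conversion: [x1, y1, x2, y2] to [x, y, w, h]."""
    x1, y1, x2, y2 = box
    return [x1, y1, x2 - x1, y2 - y1]


# The box from the official CLI example, expressed in x1y1x2y2 form
print(xywh_to_xyxy([570, 200, 230, 400]))  # [570, 200, 800, 600]
```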

You can also try FastSAM interactively in a [Colab demo](https://colab.research.google.com/drive/1oX14f6IneGGw612WgVlAiy91UHwFAvr9?usp=sharing) or on the [HuggingFace web demo](https://huggingface.co/spaces/An-619/FastSAM).

## Citations and Acknowledgements

We would like to acknowledge the FastSAM authors for their significant contributions in the field of real-time instance segmentation:

!!! Quote ""

=== "BibTeX"

```bibtex
@misc{zhao2023fast,
      title={Fast Segment Anything},
      author={Xu Zhao and Wenchao Ding and Yongqi An and Yinglong Du and Tao Yu and Min Li and Ming Tang and Jinqiao Wang},
      year={2023},
      eprint={2306.12156},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}
```

The original FastSAM paper can be found on [arXiv](https://arxiv.org/abs/2306.12156). The authors have made their work publicly available, and the codebase can be accessed on [GitHub](https://github.com/CASIA-IVA-Lab/FastSAM). We appreciate their efforts in advancing the field and making their work accessible to the broader community.


docs/en/models/index.md


---
comments: true
description: Explore the diverse range of models supported by Ultralytics, including the YOLO family, SAM, MobileSAM, FastSAM, YOLO-NAS, YOLO-World, and RT-DETR. Get started with examples for both CLI and Python usage.
keywords: Ultralytics, documentation, YOLO, SAM, MobileSAM, FastSAM, YOLO-NAS, RT-DETR, YOLO-World, models, architectures, Python, CLI
---

# Models Supported by Ultralytics

Welcome to Ultralytics' model documentation! We offer support for a wide range of models, each tailored to specific tasks like [object detection](../tasks/detect.md), [instance segmentation](../tasks/segment.md), [image classification](../tasks/classify.md), [pose estimation](../tasks/pose.md), and [multi-object tracking](../modes/track.md). If you're interested in contributing your model architecture to Ultralytics, check out our [Contributing Guide](../help/contributing.md).

## Featured Models

Here are some of the key models supported:

1. **[YOLOv3](yolov3.md)**: The third iteration of the YOLO model family, originally by Joseph Redmon, known for its efficient real-time object detection capabilities.
2. **[YOLOv4](yolov4.md)**: A darknet-native update to YOLOv3, released by Alexey Bochkovskiy in 2020.
3. **[YOLOv5](yolov5.md)**: An improved version of the YOLO architecture by Ultralytics, offering better performance and speed trade-offs compared to previous versions.
4. **[YOLOv6](yolov6.md)**: Released by [Meituan](https://about.meituan.com/) in 2022, and in use in many of the company's autonomous delivery robots.
5. **[YOLOv7](yolov7.md)**: Updated YOLO models released in 2022 by the authors of YOLOv4.
6. **[YOLOv8](yolov8.md) NEW 🚀**: The latest version of the YOLO family, featuring enhanced capabilities such as instance segmentation, pose/keypoints estimation, and classification.
7. **[YOLOv9](yolov9.md)**: An experimental model trained on the Ultralytics [YOLOv5](yolov5.md) codebase implementing Programmable Gradient Information (PGI).
8. **[Segment Anything Model (SAM)](sam.md)**: Meta's Segment Anything Model (SAM).
9. **[Mobile Segment Anything Model (MobileSAM)](mobile-sam.md)**: MobileSAM for mobile applications, by Kyung Hee University.
10. **[Fast Segment Anything Model (FastSAM)](fast-sam.md)**: FastSAM by Image & Video Analysis Group, Institute of Automation, Chinese Academy of Sciences.
11. **[YOLO-NAS](yolo-nas.md)**: YOLO Neural Architecture Search (NAS) Models.
12. **[Realtime Detection Transformers (RT-DETR)](rtdetr.md)**: Baidu's PaddlePaddle Realtime Detection Transformer (RT-DETR) models.
13. **[YOLO-World](yolo-world.md)**: Real-time Open Vocabulary Object Detection models from Tencent AI Lab.

<p align="center">
  <br>
  <iframe loading="lazy" width="720" height="405" src="https://www.youtube.com/embed/MWq1UxqTClU?si=nHAW-lYDzrz68jR0"
    title="YouTube video player" frameborder="0"
    allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share"
    allowfullscreen>
  </iframe>
  <br>
  <strong>Watch:</strong> Run Ultralytics YOLO models in just a few lines of code.
</p>

## Getting Started: Usage Examples

This example provides simple YOLO training and inference examples. For full documentation on these and other [modes](../modes/index.md), see the [Predict](../modes/predict.md), [Train](../modes/train.md), [Val](../modes/val.md) and [Export](../modes/export.md) docs pages.

Note that the example below is for YOLOv8 [Detect](../tasks/detect.md) models for object detection. For additional supported tasks, see the [Segment](../tasks/segment.md), [Classify](../tasks/classify.md) and [Pose](../tasks/pose.md) docs.

!!! Example

=== "Python"

PyTorch pretrained `*.pt` models as well as configuration `*.yaml` files can be passed to the `YOLO()`, `SAM()`, `NAS()` and `RTDETR()` classes to create a model instance in Python:

```python
from ultralytics import YOLO

# Load a COCO-pretrained YOLOv8n model
model = YOLO('yolov8n.pt')

# Display model information (optional)
model.info()

# Train the model on the COCO8 example dataset for 100 epochs
results = model.train(data='coco8.yaml', epochs=100, imgsz=640)

# Run inference with the YOLOv8n model on the 'bus.jpg' image
results = model('path/to/bus.jpg')
```

=== "CLI"

CLI commands are available to directly run the models:

```bash
# Load a COCO-pretrained YOLOv8n model and train it on the COCO8 example dataset for 100 epochs
yolo train model=yolov8n.pt data=coco8.yaml epochs=100 imgsz=640

# Load a COCO-pretrained YOLOv8n model and run inference on the 'bus.jpg' image
yolo predict model=yolov8n.pt source=path/to/bus.jpg
```

## Contributing New Models

Interested in contributing your model to Ultralytics? Great! We're always open to expanding our model portfolio.

1. **Fork the Repository**: Start by forking the [Ultralytics GitHub repository](https://github.com/ultralytics/ultralytics).

2. **Clone Your Fork**: Clone your fork to your local machine and create a new branch to work on.

3. **Implement Your Model**: Add your model following the coding standards and guidelines provided in our [Contributing Guide](../help/contributing.md).

4. **Test Thoroughly**: Make sure to test your model rigorously, both in isolation and as part of the pipeline.

5. **Create a Pull Request**: Once you're satisfied with your model, create a pull request to the main repository for review.

6. **Code Review & Merging**: After review, if your model meets our criteria, it will be merged into the main repository.

For detailed steps, consult our [Contributing Guide](../help/contributing.md).


docs/en/models/mobile-sam.md


---
comments: true
description: Learn more about MobileSAM, its implementation, how it compares with the original SAM, and how to download and test it in the Ultralytics framework. Improve your mobile applications today.
keywords: MobileSAM, Ultralytics, SAM, mobile applications, arXiv, GPU, API, image encoder, mask decoder, model download, testing method
---

# Mobile Segment Anything (MobileSAM)

The MobileSAM paper is now available on [arXiv](https://arxiv.org/pdf/2306.14289.pdf).

A demonstration of MobileSAM running on a CPU can be accessed at this [demo link](https://huggingface.co/spaces/dhkim2810/MobileSAM). On a Mac i5 CPU, it takes approximately 3 seconds. On the Hugging Face demo, the interface and lower-performance CPUs contribute to a slower response, but the demo still works effectively.

MobileSAM is implemented in various projects, including [Grounding-SAM](https://github.com/IDEA-Research/Grounded-Segment-Anything), [AnyLabeling](https://github.com/vietanhdev/anylabeling), and [Segment Anything in 3D](https://github.com/Jumpat/SegmentAnythingin3D).

MobileSAM was trained on a single GPU with a 100k-image dataset (1% of the original images) in less than a day. The code for this training will be made available in the future.

## Available Models, Supported Tasks, and Operating Modes

This table presents the available models with their specific pre-trained weights, the tasks they support, and their compatibility with different operating modes like [Inference](../modes/predict.md), [Validation](../modes/val.md), [Training](../modes/train.md), and [Export](../modes/export.md), indicated by ✅ emojis for supported modes and ❌ emojis for unsupported modes.

| Model Type | Pre-trained Weights | Tasks Supported | Inference | Validation | Training | Export |
|------------|-----------------------------------------------------------------------------------------------|----------------------------------------------|-----------|------------|----------|--------|
| MobileSAM | [mobile_sam.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/mobile_sam.pt) | [Instance Segmentation](../tasks/segment.md) | ✅ | ❌ | ❌ | ❌ |

## Adapting from SAM to MobileSAM

Since MobileSAM retains the same pipeline as the original SAM, we have incorporated the original's pre-processing, post-processing, and all other interfaces. Consequently, those currently using the original SAM can transition to MobileSAM with minimal effort.

MobileSAM performs comparably to the original SAM and retains the same pipeline except for a change in the image encoder. Specifically, we replace the original heavyweight ViT-H encoder (632M) with a smaller Tiny-ViT (5M). On a single GPU, MobileSAM operates at about 12ms per image: 8ms on the image encoder and 4ms on the mask decoder.

The following table provides a comparison of ViT-based image encoders:

| Image Encoder | Original SAM | MobileSAM |
|---------------|--------------|-----------|
| Parameters    | 611M         | 5M        |
| Speed         | 452ms        | 8ms       |

Both the original SAM and MobileSAM utilize the same prompt-guided mask decoder:

| Mask Decoder | Original SAM | MobileSAM |
|--------------|--------------|-----------|
| Parameters   | 3.876M       | 3.876M    |
| Speed        | 4ms          | 4ms       |

Here is the comparison of the whole pipeline:

| Whole Pipeline (Enc+Dec) | Original SAM | MobileSAM |
|--------------------------|--------------|-----------|
| Parameters               | 615M         | 9.66M     |
| Speed                    | 456ms        | 12ms      |
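The size and speed ratios implied by these tables can be checked with simple arithmetic. The snippet below uses only the values from the comparison tables above. It derives ratios from those numbers and is not a new benchmark.

```python
# Values copied from the comparison tables above: parameters in millions, speed in ms
tables = {
    "image encoder": {"params": (611, 5), "ms": (452, 8)},
    "whole pipeline": {"params": (615, 9.66), "ms": (456, 12)},
}

ratios = {}
for name, row in tables.items():
    sam_params, mobile_params = row["params"]
    sam_ms, mobile_ms = row["ms"]
    # Original SAM value divided by MobileSAM value, rounded to one decimal place
    ratios[name] = (round(sam_params / mobile_params, 1), round(sam_ms / mobile_ms, 1))
    print(f"{name}: {ratios[name][0]}x fewer parameters, {ratios[name][1]}x faster")
# image encoder: 122.2x fewer parameters, 56.5x faster
# whole pipeline: 63.7x fewer parameters, 38.0x faster
```

The whole-pipeline gain is smaller than the encoder-only gain because both models share the same 4ms, 3.876M-parameter mask decoder.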

The performance of MobileSAM and the original SAM is demonstrated using both a point and a box as prompts.

MobileSAM performs better than the current FastSAM while being approximately 5 times smaller and 7 times faster. More details are available at the [MobileSAM project page](https://github.com/ChaoningZhang/MobileSAM).

## Testing MobileSAM in Ultralytics

Just like the original SAM, we offer a straightforward testing method in Ultralytics, including modes for both Point and Box prompts.

### Model Download

You can download the model [here](https://github.com/ChaoningZhang/MobileSAM/blob/master/weights/mobile_sam.pt).

### Point Prompt

!!! Example

=== "Python"

```python
from ultralytics import SAM

# Load the model
model = SAM('mobile_sam.pt')

# Predict a segment based on a point prompt
model.predict('ultralytics/assets/zidane.jpg', points=[900, 370], labels=[1])
```

### Box Prompt

!!! Example

=== "Python"

```python
from ultralytics import SAM

# Load the model
model = SAM('mobile_sam.pt')

# Predict a segment based on a box prompt
model.predict('ultralytics/assets/zidane.jpg', bboxes=[439, 437, 524, 709])
```

We have implemented `MobileSAM` and `SAM` using the same API. For more usage information, please see the [SAM page](sam.md).

## Citations and Acknowledgements

If you find MobileSAM useful in your research or development work, please consider citing our paper:

!!! Quote ""

=== "BibTeX"

```bibtex
@article{mobile_sam,
  title={Faster Segment Anything: Towards Lightweight SAM for Mobile Applications},
  author={Zhang, Chaoning and Han, Dongshen and Qiao, Yu and Kim, Jung Uk and Bae, Sung Ho and Lee, Seungkyu and Hong, Choong Seon},
  journal={arXiv preprint arXiv:2306.14289},
  year={2023}
}
```


docs/en/models/rtdetr.md


---
comments: true
description: Discover the features and benefits of RT-DETR, Baidu’s efficient and adaptable real-time object detector powered by Vision Transformers, including pre-trained models.
keywords: RT-DETR, Baidu, Vision Transformers, object detection, real-time performance, CUDA, TensorRT, IoU-aware query selection, Ultralytics, Python API, PaddlePaddle
---

# Baidu's RT-DETR: A Vision Transformer-Based Real-Time Object Detector

## Overview

Real-Time Detection Transformer (RT-DETR), developed by Baidu, is a cutting-edge end-to-end object detector that provides real-time performance while maintaining high accuracy. It leverages the power of Vision Transformers (ViT) to efficiently process multiscale features by decoupling intra-scale interaction and cross-scale fusion. RT-DETR is highly adaptable, supporting flexible adjustment of inference speed using different decoder layers without retraining. The model excels on accelerated backends like CUDA with TensorRT, outperforming many other real-time object detectors.

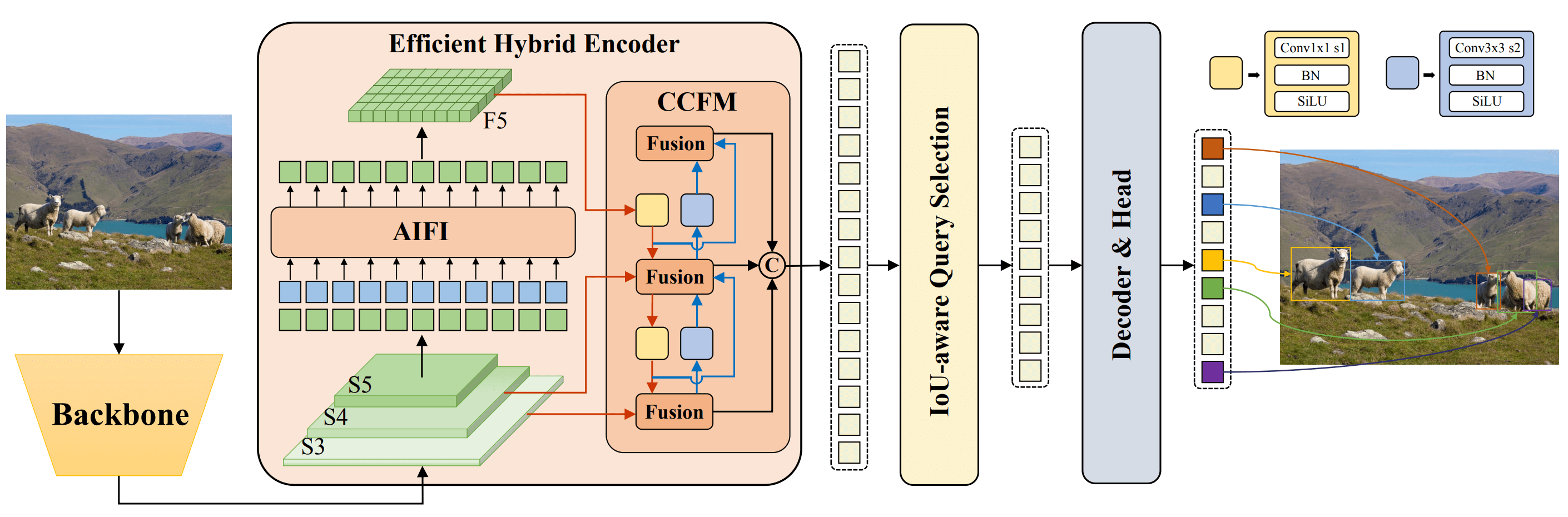

**Overview of Baidu's RT-DETR.** The RT-DETR model architecture diagram shows the last three stages of the backbone {S3, S4, S5} as the input to the encoder. The efficient hybrid encoder transforms multiscale features into a sequence of image features through intrascale feature interaction (AIFI) and cross-scale feature-fusion module (CCFM). The IoU-aware query selection is employed to select a fixed number of image features to serve as initial object queries for the decoder. Finally, the decoder with auxiliary prediction heads iteratively optimizes object queries to generate boxes and confidence scores ([source](https://arxiv.org/pdf/2304.08069.pdf)).

|

||||

|

||||

### Key Features

- **Efficient Hybrid Encoder:** Baidu's RT-DETR uses an efficient hybrid encoder that processes multiscale features by decoupling intra-scale interaction and cross-scale fusion. This unique Vision Transformers-based design reduces computational costs and allows for real-time object detection.
- **IoU-aware Query Selection:** Baidu's RT-DETR improves object query initialization by utilizing IoU-aware query selection. This allows the model to focus on the most relevant objects in the scene, enhancing the detection accuracy.
- **Adaptable Inference Speed:** Baidu's RT-DETR supports flexible adjustments of inference speed by using different decoder layers without the need for retraining. This adaptability facilitates practical application in various real-time object detection scenarios.
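As a rough illustration of the selection step, the sketch below picks the top-K encoder features by score and uses them as initial decoder queries. It deliberately omits the IoU-aware part. As described in the RT-DETR paper, that part happens during training: the classification score is supervised so that it also reflects localization quality (IoU), which makes ranking by score favor well-localized features. The function name `select_initial_queries`, the toy scores, and the string stand-ins for feature vectors are all hypothetical.

```python
import heapq
from typing import List, Sequence, Tuple


def select_initial_queries(features: Sequence[str], scores: Sequence[float],
                           k: int) -> Tuple[List[int], List[str]]:
    """Pick the k highest-scoring encoder features as initial object queries.

    Hypothetical helper: `features` stands in for encoder feature vectors and
    `scores` for their classification confidences (IoU-aware in RT-DETR).
    """
    top = heapq.nlargest(k, range(len(scores)), key=lambda i: scores[i])
    return top, [features[i] for i in top]


features = ["f0", "f1", "f2", "f3", "f4"]
scores = [0.10, 0.80, 0.30, 0.95, 0.50]
indices, queries = select_initial_queries(features, scores, k=3)
print(indices, queries)  # [3, 1, 4] ['f3', 'f1', 'f4']
```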

## Pre-trained Models

The Ultralytics Python API provides pre-trained PaddlePaddle RT-DETR models with different scales:

- RT-DETR-L: 53.0% AP on COCO val2017, 114 FPS on T4 GPU
- RT-DETR-X: 54.8% AP on COCO val2017, 74 FPS on T4 GPU
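FPS figures can also be read as per-image latency (1000 / FPS milliseconds), which is often easier to compare against a latency budget. The snippet below is plain arithmetic on the two numbers listed above. It treats them as single-image throughput, which is an assumption, and latency measured on your own hardware will differ.

```python
# Throughput figures quoted above (T4 GPU), in frames per second
fps = {"RT-DETR-L": 114, "RT-DETR-X": 74}

# Per-image latency in milliseconds is 1000 / FPS
latency_ms = {name: round(1000 / f, 2) for name, f in fps.items()}
for name, ms in latency_ms.items():
    print(f"{name}: {ms} ms per image")
# RT-DETR-L: 8.77 ms per image
# RT-DETR-X: 13.51 ms per image
```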

## Usage Examples

This example provides simple RT-DETR training and inference examples. For full documentation on these and other [modes](../modes/index.md), see the [Predict](../modes/predict.md), [Train](../modes/train.md), [Val](../modes/val.md) and [Export](../modes/export.md) docs pages.

!!! Example

=== "Python"

```python
from ultralytics import RTDETR

# Load a COCO-pretrained RT-DETR-l model
model = RTDETR('rtdetr-l.pt')

# Display model information (optional)
model.info()

# Train the model on the COCO8 example dataset for 100 epochs
results = model.train(data='coco8.yaml', epochs=100, imgsz=640)

# Run inference with the RT-DETR-l model on the 'bus.jpg' image
results = model('path/to/bus.jpg')
```

=== "CLI"

```bash
# Load a COCO-pretrained RT-DETR-l model and train it on the COCO8 example dataset for 100 epochs
yolo train model=rtdetr-l.pt data=coco8.yaml epochs=100 imgsz=640

# Load a COCO-pretrained RT-DETR-l model and run inference on the 'bus.jpg' image
yolo predict model=rtdetr-l.pt source=path/to/bus.jpg
```

## Supported Tasks and Modes

This table presents the model types, the specific pre-trained weights, the tasks supported by each model, and the various modes ([Train](../modes/train.md), [Val](../modes/val.md), [Predict](../modes/predict.md), [Export](../modes/export.md)) that are supported, indicated by ✅ emojis.

| Model Type | Pre-trained Weights | Tasks Supported | Inference | Validation | Training | Export |
|---------------------|-------------------------------------------------------------------------------------------|----------------------------------------|-----------|------------|----------|--------|
| RT-DETR Large | [rtdetr-l.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/rtdetr-l.pt) | [Object Detection](../tasks/detect.md) | ✅ | ✅ | ✅ | ✅ |
| RT-DETR Extra-Large | [rtdetr-x.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/rtdetr-x.pt) | [Object Detection](../tasks/detect.md) | ✅ | ✅ | ✅ | ✅ |

## Citations and Acknowledgements

If you use Baidu's RT-DETR in your research or development work, please cite the [original paper](https://arxiv.org/abs/2304.08069):

!!! Quote ""

=== "BibTeX"

```bibtex
@misc{lv2023detrs,
      title={DETRs Beat YOLOs on Real-time Object Detection},
      author={Wenyu Lv and Shangliang Xu and Yian Zhao and Guanzhong Wang and Jinman Wei and Cheng Cui and Yuning Du and Qingqing Dang and Yi Liu},
      year={2023},
      eprint={2304.08069},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}
```

We would like to acknowledge Baidu and the [PaddlePaddle](https://github.com/PaddlePaddle/PaddleDetection) team for creating and maintaining this valuable resource for the computer vision community. Their contribution to the field with the development of the Vision Transformers-based real-time object detector, RT-DETR, is greatly appreciated.

_Keywords: RT-DETR, Transformer, ViT, Vision Transformers, Baidu RT-DETR, PaddlePaddle, Paddle Paddle RT-DETR, real-time object detection, Vision Transformers-based object detection, pre-trained PaddlePaddle RT-DETR models, Baidu's RT-DETR usage, Ultralytics Python API_


docs/en/models/sam.md


---
comments: true
description: Explore the cutting-edge Segment Anything Model (SAM), available in Ultralytics, which allows real-time image segmentation. Learn about its promptable segmentation, zero-shot performance, and how to use it.
keywords: Ultralytics, image segmentation, Segment Anything Model, SAM, SA-1B dataset, real-time performance, zero-shot transfer, object detection, image analysis, machine learning
---

# Segment Anything Model (SAM)

Welcome to the frontier of image segmentation with the Segment Anything Model, or SAM. This revolutionary model has changed the game by introducing promptable image segmentation with real-time performance, setting new standards in the field.

## Introduction to SAM: The Segment Anything Model

The Segment Anything Model, or SAM, is a cutting-edge image segmentation model that allows for promptable segmentation, providing unparalleled versatility in image analysis tasks. SAM forms the heart of the Segment Anything initiative, a groundbreaking project that introduces a novel model, task, and dataset for image segmentation.

SAM's advanced design allows it to adapt to new image distributions and tasks without prior knowledge, a feature known as zero-shot transfer. Trained on the expansive [SA-1B dataset](https://ai.facebook.com/datasets/segment-anything/), which contains more than 1 billion masks spread over 11 million carefully curated images, SAM has displayed impressive zero-shot performance, surpassing previous fully supervised results in many cases.

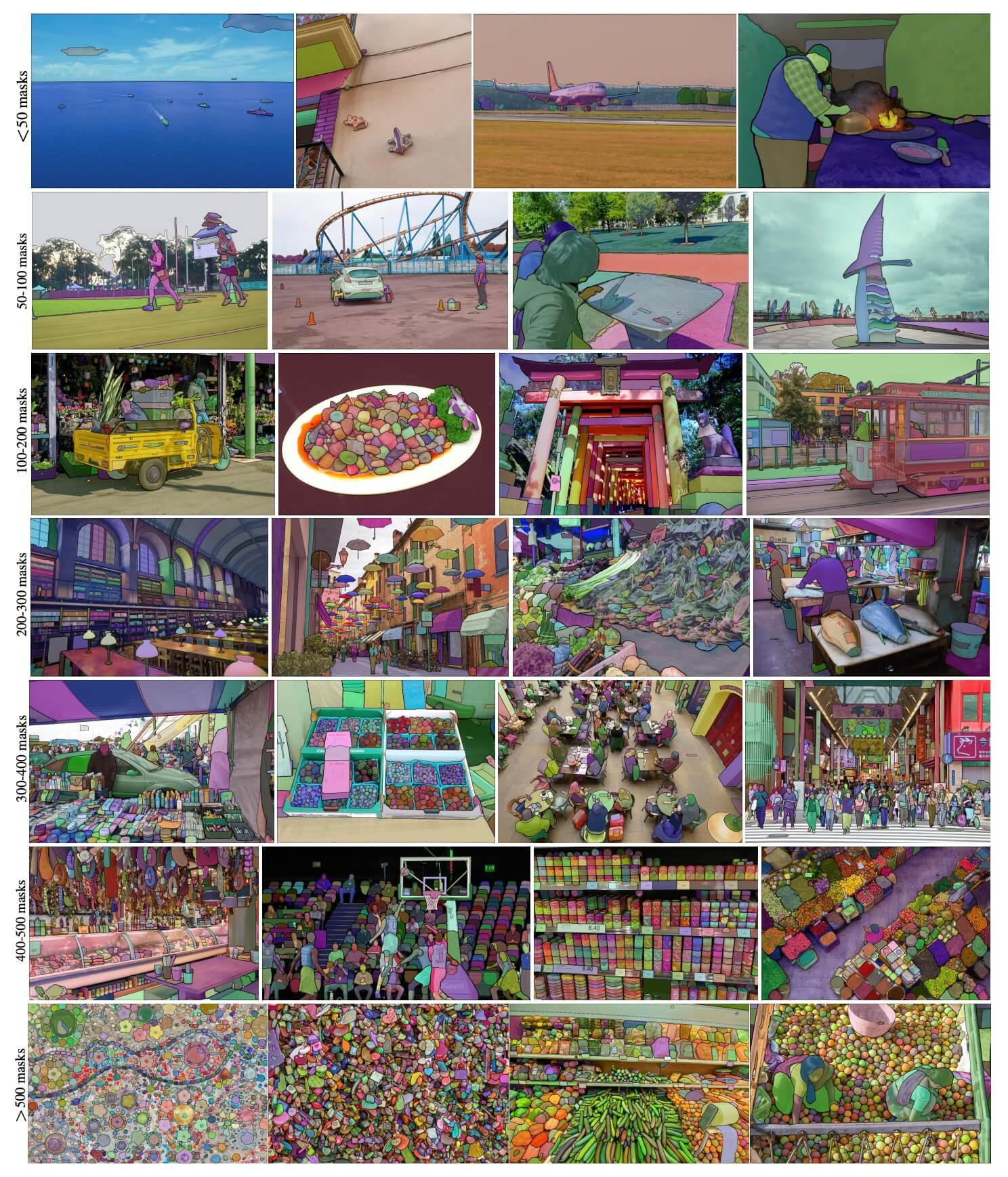

**SA-1B Example images.** Dataset images overlaid masks from the newly introduced SA-1B dataset. SA-1B contains 11M diverse, high-resolution, licensed, and privacy protecting images and 1.1B high-quality segmentation masks. These masks were annotated fully automatically by SAM, and as verified by human ratings and numerous experiments, are of high quality and diversity. Images are grouped by number of masks per image for visualization (there are ∼100 masks per image on average).

|

||||

|

||||
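As a quick sanity check on the caption's "∼100 masks per image" figure, dividing the SA-1B mask count by its image count gives the average directly. This is plain arithmetic on the rounded numbers quoted above (1.1B masks, 11M images), not a statistic computed from the dataset files.

```python
# Rounded figures quoted in the SA-1B caption above
total_masks = 1.1e9   # ~1.1 billion masks
total_images = 11e6   # ~11 million images

# Average number of masks per image
masks_per_image = total_masks / total_images
print(f"{masks_per_image:.0f} masks per image on average")
```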

## Key Features of the Segment Anything Model (SAM)

- **Promptable Segmentation Task:** SAM was designed with a promptable segmentation task in mind, allowing it to generate valid segmentation masks from any given prompt, such as spatial or text clues identifying an object.
- **Advanced Architecture:** The Segment Anything Model employs a powerful image encoder, a prompt encoder, and a lightweight mask decoder. This unique architecture enables flexible prompting, real-time mask computation, and ambiguity awareness in segmentation tasks.
- **The SA-1B Dataset:** Introduced by the Segment Anything project, the SA-1B dataset features over 1 billion masks on 11 million images. As the largest segmentation dataset to date, it provides SAM with a diverse and large-scale training data source.
- **Zero-Shot Performance:** SAM displays outstanding zero-shot performance across various segmentation tasks, making it a ready-to-use tool for diverse applications with minimal need for prompt engineering.


For an in-depth look at the Segment Anything Model and the SA-1B dataset, please visit the [Segment Anything website](https://segment-anything.com) and check out the research paper [Segment Anything](https://arxiv.org/abs/2304.02643).


## Available Models, Supported Tasks, and Operating Modes

This table presents the available models with their specific pre-trained weights, the tasks they support, and their compatibility with different operating modes like [Inference](../modes/predict.md), [Validation](../modes/val.md), [Training](../modes/train.md), and [Export](../modes/export.md), indicated by ✅ for supported modes and ❌ for unsupported modes.

| Model Type | Pre-trained Weights                                                                 | Tasks Supported                              | Inference | Validation | Training | Export |
|------------|-------------------------------------------------------------------------------------|----------------------------------------------|-----------|------------|----------|--------|
| SAM base   | [sam_b.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/sam_b.pt) | [Instance Segmentation](../tasks/segment.md) | ✅         | ❌          | ❌        | ❌      |
| SAM large  | [sam_l.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/sam_l.pt) | [Instance Segmentation](../tasks/segment.md) | ✅         | ❌          | ❌        | ❌      |


## How to Use SAM: Versatility and Power in Image Segmentation

The Segment Anything Model can be employed for a multitude of downstream tasks that go beyond its training data. This includes edge detection, object proposal generation, instance segmentation, and preliminary text-to-mask prediction. With prompt engineering, SAM can swiftly adapt to new tasks and data distributions in a zero-shot manner, establishing it as a versatile and potent tool for all your image segmentation needs.


### SAM prediction example

!!! Example "Segment with prompts"

Segment an image with the given prompts.

=== "Python"

```python
from ultralytics import SAM

# Load a model
model = SAM('sam_b.pt')

# Display model information (optional)
model.info()

# Run inference with a bounding-box prompt
model('ultralytics/assets/zidane.jpg', bboxes=[439, 437, 524, 709])

# Run inference with a point prompt (label 1 marks the point as foreground)
model('ultralytics/assets/zidane.jpg', points=[900, 370], labels=[1])
```


!!! Example "Segment everything"

Segment the whole image.

=== "Python"

```python
from ultralytics import SAM

# Load a model
model = SAM('sam_b.pt')

# Display model information (optional)
model.info()

# Run inference
model('path/to/image.jpg')
```

=== "CLI"

```bash
# Run inference with a SAM model
yolo predict model=sam_b.pt source=path/to/image.jpg
```

- If no prompts (bboxes, points, or masks) are passed, SAM segments the whole image.

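Segmenting "everything" without user prompts is typically done by prompting the model with a regular grid of points spread over the image, as in the original SAM automatic mask generator. The sketch below builds such a grid in pure Python. The helper names `build_point_grid` and `scale_points` and the `n_per_side` parameter are illustrative assumptions; how the Ultralytics `points_stride` argument maps onto this grid is not claimed here.

```python
def build_point_grid(n_per_side: int) -> list[tuple[float, float]]:
    """Return n_per_side**2 (x, y) points in normalized [0, 1] coordinates.

    Points sit at the centers of an n_per_side x n_per_side grid of equal
    cells, so none of them lies exactly on the image border.
    """
    offset = 1.0 / (2 * n_per_side)  # half a cell width
    step = (1.0 - 2 * offset) / (n_per_side - 1) if n_per_side > 1 else 0.0
    coords = [offset + i * step for i in range(n_per_side)]
    return [(x, y) for y in coords for x in coords]


def scale_points(points, width: int, height: int) -> list[tuple[float, float]]:
    """Convert normalized points to pixel coordinates for a given image size."""
    return [(x * width, y * height) for x, y in points]


# A coarse 4 x 4 grid of point prompts for a 1280 x 720 image
grid = build_point_grid(4)
pixel_points = scale_points(grid, width=1280, height=720)
print(len(pixel_points), pixel_points[0])
```

Each point would then be passed to the model as a separate foreground prompt, and the resulting masks filtered and deduplicated; a denser grid finds smaller objects at the cost of more decoder calls.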

!!! Example "SAMPredictor example"

With `SAMPredictor`, you can set the image once and run prompt inference multiple times without re-running the image encoder each time.

=== "Prompt inference"

```python
import cv2

from ultralytics.models.sam import Predictor as SAMPredictor

# Create SAMPredictor
overrides = dict(conf=0.25, task='segment', mode='predict', imgsz=1024, model="mobile_sam.pt")
predictor = SAMPredictor(overrides=overrides)

# Set image
predictor.set_image("ultralytics/assets/zidane.jpg")  # set with image file
predictor.set_image(cv2.imread("ultralytics/assets/zidane.jpg"))  # set with np.ndarray
results = predictor(bboxes=[439, 437, 524, 709])
results = predictor(points=[900, 370], labels=[1])

# Reset image
predictor.reset_image()
```


Segment everything with additional args.

=== "Segment everything"

```python
from ultralytics.models.sam import Predictor as SAMPredictor

# Create SAMPredictor
overrides = dict(conf=0.25, task='segment', mode='predict', imgsz=1024, model="mobile_sam.pt")
predictor = SAMPredictor(overrides=overrides)

# Segment with additional args
results = predictor(source="ultralytics/assets/zidane.jpg", crop_n_layers=1, points_stride=64)
```

- For more `Segment everything` arguments, see the [`Predictor/generate` Reference](../reference/models/sam/predict.md).

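The benefit of `set_image` comes from separating the expensive image encoder from the lightweight prompt encoder and mask decoder: the image embedding is computed once and reused for every prompt. The toy class below illustrates that caching pattern with stand-in functions. `CachedPromptPredictor`, `fake_encoder`, and `fake_decoder` are hypothetical names for illustration only, not part of the Ultralytics API.

```python
class CachedPromptPredictor:
    """Toy predictor that caches an image embedding across prompt queries."""

    def __init__(self, encode_image, decode_mask):
        self.encode_image = encode_image  # expensive: run once per image
        self.decode_mask = decode_mask    # cheap: run once per prompt
        self._embedding = None

    def set_image(self, image):
        # Run the heavy encoder once and keep the result
        self._embedding = self.encode_image(image)

    def reset_image(self):
        self._embedding = None

    def __call__(self, **prompt):
        if self._embedding is None:
            raise RuntimeError("call set_image() before prompting")
        return self.decode_mask(self._embedding, prompt)


encoder_calls = 0


def fake_encoder(image):
    """Stand-in for the image encoder; counts how often it runs."""
    global encoder_calls
    encoder_calls += 1
    return f"embedding({image})"


def fake_decoder(embedding, prompt):
    """Stand-in for the mask decoder; echoes the embedding and prompt keys."""
    return (embedding, tuple(sorted(prompt)))


predictor = CachedPromptPredictor(fake_encoder, fake_decoder)
predictor.set_image("zidane.jpg")
predictor(bboxes=[439, 437, 524, 709])
predictor(points=[900, 370], labels=[1])
print(encoder_calls)  # two prompts, but the encoder ran only once
```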

## SAM comparison vs YOLOv8

Here we compare Meta's smallest SAM model, SAM-b, and two lighter SAM alternatives with Ultralytics' smallest segmentation model, [YOLOv8n-seg](../tasks/segment.md):

| Model                                          | Size                       | Parameters              | Speed (CPU)                |
|------------------------------------------------|----------------------------|-------------------------|----------------------------|
| Meta's SAM-b                                   | 358 MB                     | 94.7 M                  | 51096 ms/im                |
| [MobileSAM](mobile-sam.md)                     | 40.7 MB                    | 10.1 M                  | 46122 ms/im                |
| [FastSAM-s](fast-sam.md) with YOLOv8 backbone  | 23.7 MB                    | 11.8 M                  | 115 ms/im                  |
| Ultralytics [YOLOv8n-seg](../tasks/segment.md) | **6.7 MB** (53.4x smaller) | **3.4 M** (27.9x fewer) | **59 ms/im** (866x faster) |

This comparison shows the order-of-magnitude differences in model sizes and speeds. While SAM offers unique capabilities for automatic segmentation, it is not a direct competitor to YOLOv8 segment models, which are smaller, faster, and more efficient.


Tests run on a 2023 Apple M2 MacBook with 16GB of RAM. To reproduce this test:

!!! Example

=== "Python"

```python
from ultralytics import FastSAM, SAM, YOLO

# Profile SAM-b
model = SAM('sam_b.pt')
model.info()
model('ultralytics/assets')

# Profile MobileSAM
model = SAM('mobile_sam.pt')
model.info()
model('ultralytics/assets')

# Profile FastSAM-s
model = FastSAM('FastSAM-s.pt')
model.info()
model('ultralytics/assets')

# Profile YOLOv8n-seg
model = YOLO('yolov8n-seg.pt')
model.info()
model('ultralytics/assets')
```

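The multipliers in the YOLOv8n-seg row compare it with the Meta SAM-b row. The short script below recomputes them from the table values, which is a quick way to update the comparison if you rerun the benchmark on your own hardware.

```python
# Values copied from the comparison table above
sam_b = {"size_mb": 358, "params_m": 94.7, "cpu_ms": 51096}
yolov8n_seg = {"size_mb": 6.7, "params_m": 3.4, "cpu_ms": 59}

size_ratio = sam_b["size_mb"] / yolov8n_seg["size_mb"]
param_ratio = sam_b["params_m"] / yolov8n_seg["params_m"]
speed_ratio = sam_b["cpu_ms"] / yolov8n_seg["cpu_ms"]

print(f"{size_ratio:.1f}x smaller, {param_ratio:.1f}x fewer parameters, {speed_ratio:.0f}x faster")
```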

## Auto-Annotation: A Quick Path to Segmentation Datasets

Auto-annotation is a key feature of SAM, allowing users to generate a [segmentation dataset](https://docs.ultralytics.com/datasets/segment) using a pre-trained detection model. This feature enables rapid and accurate annotation of a large number of images, bypassing the need for time-consuming manual labeling.

### Generate Your Segmentation Dataset Using a Detection Model


To auto-annotate your dataset with the Ultralytics framework, use the `auto_annotate` function as shown below:

!!! Example

=== "Python"

```python
from ultralytics.data.annotator import auto_annotate

auto_annotate(data="path/to/images", det_model="yolov8x.pt", sam_model='sam_b.pt')
```


| Argument   | Type                | Description                                                                                              | Default      |
|------------|---------------------|----------------------------------------------------------------------------------------------------------|--------------|
| data       | str                 | Path to a folder containing images to be annotated.                                                      |              |
| det_model  | str, optional       | Pre-trained YOLO detection model.                                                                        | 'yolov8x.pt' |
| sam_model  | str, optional       | Pre-trained SAM segmentation model.                                                                      | 'sam_b.pt'   |
| device     | str, optional       | Device to run the models on. An empty string selects a GPU if available, otherwise the CPU.              | ''           |
| output_dir | str, None, optional | Directory to save the annotated results. Defaults to a 'labels' folder in the same directory as 'data'.  | None         |


The `auto_annotate` function takes the path to your images, with optional arguments for specifying the pre-trained detection and SAM segmentation models, the device to run the models on, and the output directory for saving the annotated results.

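The generated labels follow the YOLO segmentation format: one text file per image, with one line per object holding the class index followed by the polygon's x, y coordinates normalized to [0, 1]. The helper below is a minimal sketch of producing such a line from a pixel-space polygon. `polygon_to_yolo_line` is a hypothetical name, and the exact numeric precision `auto_annotate` writes is an assumption, not taken from its source.

```python
def polygon_to_yolo_line(class_id: int, polygon, img_w: int, img_h: int) -> str:
    """Format one object as a YOLO segmentation label line.

    polygon: iterable of (x, y) vertices in pixel coordinates.
    Returns "class x1 y1 x2 y2 ..." with coordinates normalized to [0, 1].
    """
    coords = []
    for x, y in polygon:
        # Normalize by image width/height, then clamp to [0, 1]
        coords.append(min(max(x / img_w, 0.0), 1.0))
        coords.append(min(max(y / img_h, 0.0), 1.0))
    return f"{class_id} " + " ".join(f"{c:.6f}" for c in coords)


# A triangle on a 640 x 480 image, labeled as class 0
line = polygon_to_yolo_line(0, [(64, 48), (320, 48), (320, 240)], img_w=640, img_h=480)
print(line)
```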

Auto-annotation with pre-trained models can dramatically cut down the time and effort required for creating high-quality segmentation datasets. This feature is especially beneficial for researchers and developers dealing with large image collections, as it allows them to focus on model development and evaluation rather than manual annotation.


## Citations and Acknowledgements

If you find SAM useful in your research or development work, please consider citing the original paper:

!!! Quote ""

=== "BibTeX"

```bibtex
@misc{kirillov2023segment,
      title={Segment Anything},
      author={Alexander Kirillov and Eric Mintun and Nikhila Ravi and Hanzi Mao and Chloe Rolland and Laura Gustafson and Tete Xiao and Spencer Whitehead and Alexander C. Berg and Wan-Yen Lo and Piotr Dollár and Ross Girshick},
      year={2023},
      eprint={2304.02643},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}
```


We would like to express our gratitude to Meta AI for creating and maintaining this valuable resource for the computer vision community.

_Keywords: Segment Anything, Segment Anything Model, SAM, Meta SAM, image segmentation, promptable segmentation, zero-shot performance, SA-1B dataset, advanced architecture, auto-annotation, Ultralytics, pre-trained models, SAM base, SAM large, instance segmentation, computer vision, AI, artificial intelligence, machine learning, data annotation, segmentation masks, detection model, YOLO detection model, bibtex, Meta AI._


docs/en/models/yolo-nas.md

---
comments: true
description: Explore detailed documentation of YOLO-NAS, a superior object detection model. Learn about its features, pre-trained models, usage with Ultralytics Python API, and more.
keywords: YOLO-NAS, Deci AI, object detection, deep learning, neural architecture search, Ultralytics Python API, YOLO model, pre-trained models, quantization, optimization, COCO, Objects365, Roboflow 100
---


# YOLO-NAS

## Overview

Developed by Deci AI, YOLO-NAS is a groundbreaking foundation model for object detection. It is the product of advanced Neural Architecture Search technology and was designed to address the limitations of previous YOLO models. With significant improvements in quantization support and accuracy-latency trade-offs, YOLO-NAS represents a major leap in object detection.


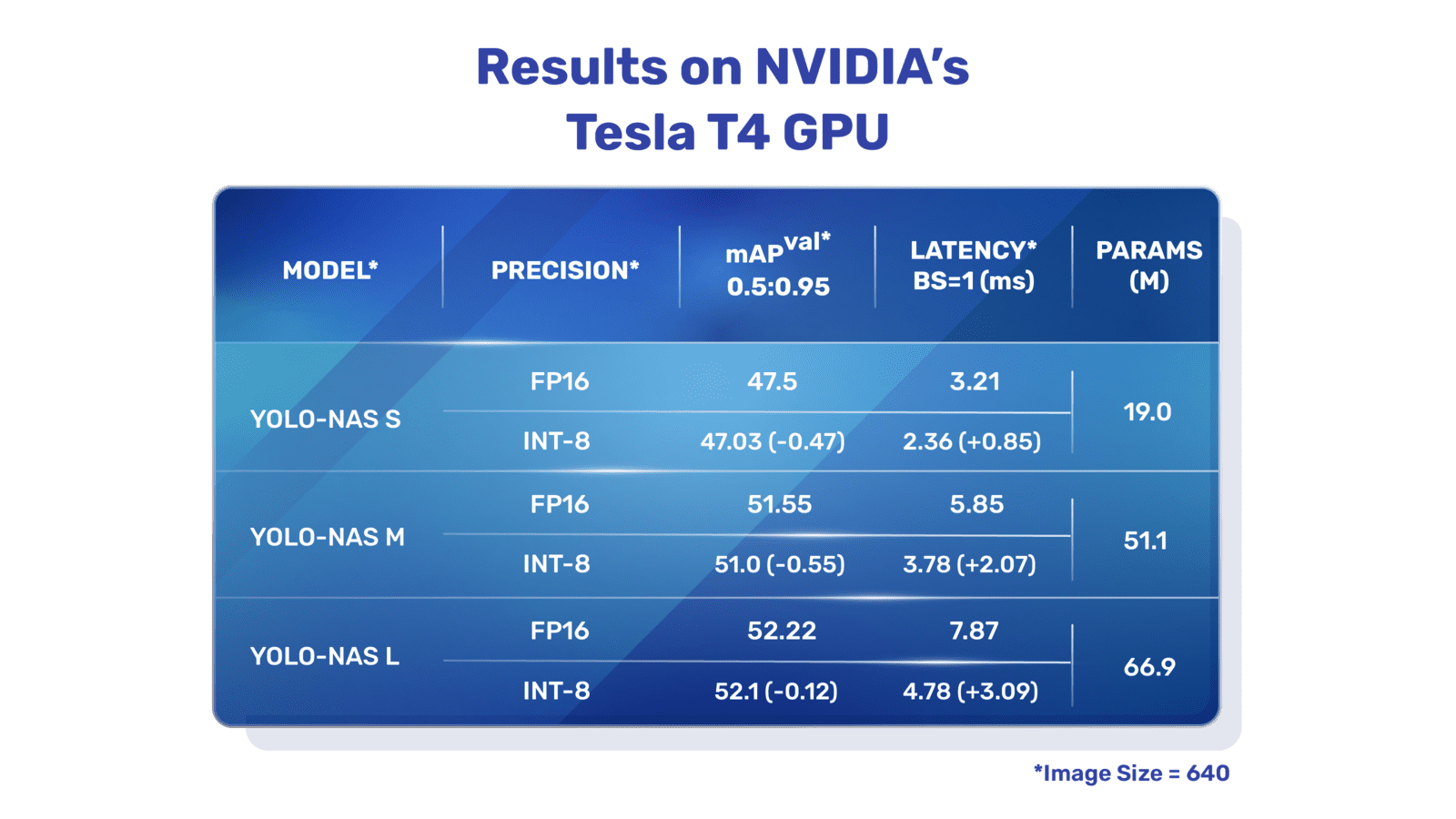

**Overview of YOLO-NAS.** YOLO-NAS employs quantization-aware blocks and selective quantization for optimal performance. The model, when converted to its INT8 quantized version, experiences a minimal precision drop, a significant improvement over other models. These advancements culminate in a superior architecture with unprecedented object detection capabilities and outstanding performance.

|

||||

|

||||

### Key Features

- **Quantization-Friendly Basic Block:** YOLO-NAS introduces a new basic block that is friendly to quantization, addressing one of the significant limitations of previous YOLO models.
- **Sophisticated Training and Quantization:** YOLO-NAS leverages advanced training schemes and post-training quantization to enhance performance.
- **AutoNAC Optimization and Pre-training:** YOLO-NAS utilizes AutoNAC optimization and is pre-trained on prominent datasets such as COCO, Objects365, and Roboflow 100. This pre-training makes it well suited for downstream object detection tasks in production environments.

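To make "post-training quantization" concrete: the simplest INT8 scheme maps floating-point values to 8-bit integers with a single scale factor and maps them back at inference time. The rounding error it introduces is the precision loss that quantization-friendly blocks aim to keep small. The sketch below shows symmetric per-tensor quantization in pure Python; it is a generic illustration, not Deci's actual quantization pipeline.

```python
def quantize_int8(values):
    """Symmetric per-tensor INT8 quantization: returns (int codes, scale)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clip to the signed 8-bit range
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize_int8(codes, scale):
    """Map INT8 codes back to approximate floating-point values."""
    return [c * scale for c in codes]


weights = [0.52, -1.27, 0.003, 0.9, -0.35]
codes, scale = quantize_int8(weights)
restored = dequantize_int8(codes, scale)

# The error per value is at most half a quantization step (scale / 2)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, f"scale={scale:.4f}", f"max error={max_error:.4f}")
```

A single per-tensor scale is the coarsest option; per-channel scales or quantization-aware training usually reduce the error further.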

## Pre-trained Models

Experience the power of next-generation object detection with the pre-trained YOLO-NAS models provided by Ultralytics. These models are designed to deliver top-notch performance in terms of both speed and accuracy. Choose from a variety of options tailored to your specific needs:

| Model            | mAP   | Latency (ms) |
|------------------|-------|--------------|
| YOLO-NAS S       | 47.5  | 3.21         |
| YOLO-NAS M       | 51.55 | 5.85         |
| YOLO-NAS L       | 52.22 | 7.87         |
| YOLO-NAS S INT-8 | 47.03 | 2.36         |
| YOLO-NAS M INT-8 | 51.0  | 3.78         |
| YOLO-NAS L INT-8 | 52.1  | 4.78         |


Each model variant is designed to offer a balance between Mean Average Precision (mAP) and latency, helping you optimize your object detection tasks for both performance and speed.

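The table also shows what INT8 quantization trades away. The script below uses only the numbers in the table to compute, for each size, the latency speedup and the mAP change when moving from the full-precision model to its INT-8 counterpart.

```python
# (mAP, latency in ms) copied from the table above
full_precision = {"S": (47.5, 3.21), "M": (51.55, 5.85), "L": (52.22, 7.87)}
int8 = {"S": (47.03, 2.36), "M": (51.0, 3.78), "L": (52.1, 4.78)}

results = {}
for size in ("S", "M", "L"):
    fp_map, fp_ms = full_precision[size]
    q_map, q_ms = int8[size]
    speedup = round(fp_ms / q_ms, 2)    # how many times faster INT-8 runs
    map_drop = round(fp_map - q_map, 2)  # mAP points lost to quantization
    results[size] = (speedup, map_drop)
    print(f"YOLO-NAS {size}: {speedup:.2f}x faster, mAP -{map_drop:.2f}")
```

On these figures, the INT-8 variants are roughly 1.4x to 1.6x faster while losing about half a mAP point or less.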

## Usage Examples

Ultralytics has made YOLO-NAS models easy to integrate into your Python applications via our `ultralytics` Python package. The package provides a user-friendly Python API to streamline the process.

The following examples show how to use YOLO-NAS models with the `ultralytics` package for inference and validation:

### Inference and Validation Examples

In this example we validate YOLO-NAS-s on the COCO8 dataset.


!!! Example

This example provides simple inference and validation code for YOLO-NAS. For handling inference results, see [Predict](../modes/predict.md) mode. For using YOLO-NAS with additional modes, see [Val](../modes/val.md) and [Export](../modes/export.md). YOLO-NAS in the `ultralytics` package does not support training.

=== "Python"

PyTorch pretrained `*.pt` model files can be passed to the `NAS()` class to create a model instance in Python:

```python
from ultralytics import NAS

# Load a COCO-pretrained YOLO-NAS-s model
model = NAS('yolo_nas_s.pt')

# Display model information (optional)
model.info()

# Validate the model on the COCO8 example dataset
results = model.val(data='coco8.yaml')

# Run inference with the YOLO-NAS-s model on the 'bus.jpg' image
results = model('path/to/bus.jpg')
```


=== "CLI"

CLI commands are available to directly run the models:

```bash
# Load a COCO-pretrained YOLO-NAS-s model and validate its performance on the COCO8 example dataset
yolo val model=yolo_nas_s.pt data=coco8.yaml

# Load a COCO-pretrained YOLO-NAS-s model and run inference on the 'bus.jpg' image
yolo predict model=yolo_nas_s.pt source=path/to/bus.jpg
```


## Supported Tasks and Modes

We offer three variants of the YOLO-NAS models: Small (s), Medium (m), and Large (l). Each variant is designed to cater to different computational and performance needs:

- **YOLO-NAS-s**: Optimized for environments where computational resources are limited but efficiency is key.
- **YOLO-NAS-m**: Offers a balanced approach, suitable for general-purpose object detection with higher accuracy.
- **YOLO-NAS-l**: Tailored for scenarios requiring the highest accuracy, where computational resources are less of a constraint.

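One simple way to choose among these variants is to take the most accurate model whose latency fits your budget. The sketch below applies that rule to the mAP and latency figures from the Pre-trained Models table. Latency depends heavily on hardware and runtime, so treat the table values (and the example budgets) as placeholders for your own measurements. `pick_model` is a hypothetical helper, not part of the `ultralytics` package.

```python
# (name, mAP, latency in ms) from the Pre-trained Models table
VARIANTS = [
    ("YOLO-NAS S", 47.5, 3.21),
    ("YOLO-NAS M", 51.55, 5.85),
    ("YOLO-NAS L", 52.22, 7.87),
    ("YOLO-NAS S INT-8", 47.03, 2.36),
    ("YOLO-NAS M INT-8", 51.0, 3.78),
    ("YOLO-NAS L INT-8", 52.1, 4.78),
]


def pick_model(latency_budget_ms, variants=VARIANTS):
    """Return the highest-mAP variant whose latency fits the budget, or None."""
    candidates = [v for v in variants if v[2] <= latency_budget_ms]
    if not candidates:
        return None
    return max(candidates, key=lambda v: v[1])


for budget in (2.0, 3.0, 5.0, 10.0):
    choice = pick_model(budget)
    print(budget, choice[0] if choice else "no variant fits")
```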

Below is a detailed overview of each model, including links to their pre-trained weights, the tasks they support, and their compatibility with different operating modes.

| Model Type | Pre-trained Weights                                                                           | Tasks Supported                        | Inference | Validation | Training | Export |
|------------|-----------------------------------------------------------------------------------------------|----------------------------------------|-----------|------------|----------|--------|
| YOLO-NAS-s | [yolo_nas_s.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolo_nas_s.pt) | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ✅      |
| YOLO-NAS-m | [yolo_nas_m.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolo_nas_m.pt) | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ✅      |
| YOLO-NAS-l | [yolo_nas_l.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolo_nas_l.pt) | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ✅      |


## Citations and Acknowledgements

If you employ YOLO-NAS in your research or development work, please cite SuperGradients:

!!! Quote ""

=== "BibTeX"

```bibtex
@misc{supergradients,
      doi = {10.5281/ZENODO.7789328},
      url = {https://zenodo.org/record/7789328},
      author = {Aharon, Shay and {Louis-Dupont} and {Ofri Masad} and Yurkova, Kate and {Lotem Fridman} and {Lkdci} and Khvedchenya, Eugene and Rubin, Ran and Bagrov, Natan and Tymchenko, Borys and Keren, Tomer and Zhilko, Alexander and {Eran-Deci}},
      title = {Super-Gradients},
      publisher = {GitHub},
      journal = {GitHub repository},
      year = {2021},
}
```


We express our gratitude to Deci AI's [SuperGradients](https://github.com/Deci-AI/super-gradients/) team for their efforts in creating and maintaining this valuable resource for the computer vision community. We believe YOLO-NAS, with its innovative architecture and superior object detection capabilities, will become a critical tool for developers and researchers alike.

_Keywords: YOLO-NAS, Deci AI, object detection, deep learning, neural architecture search, Ultralytics Python API, YOLO model, SuperGradients, pre-trained models, quantization-friendly basic block, advanced training schemes, post-training quantization, AutoNAC optimization, COCO, Objects365, Roboflow 100_


docs/en/models/yolo-world.md

---
comments: true
description: Discover YOLO-World, a YOLOv8-based framework for real-time open-vocabulary object detection in images. It enhances user interaction, boosts computational efficiency, and adapts across various vision tasks.
keywords: YOLO-World, YOLOv8, machine learning, CNN-based framework, object detection, real-time detection, Ultralytics, vision tasks, image processing, industrial applications, user interaction
---


# YOLO-World Model

|

||||

|

||||

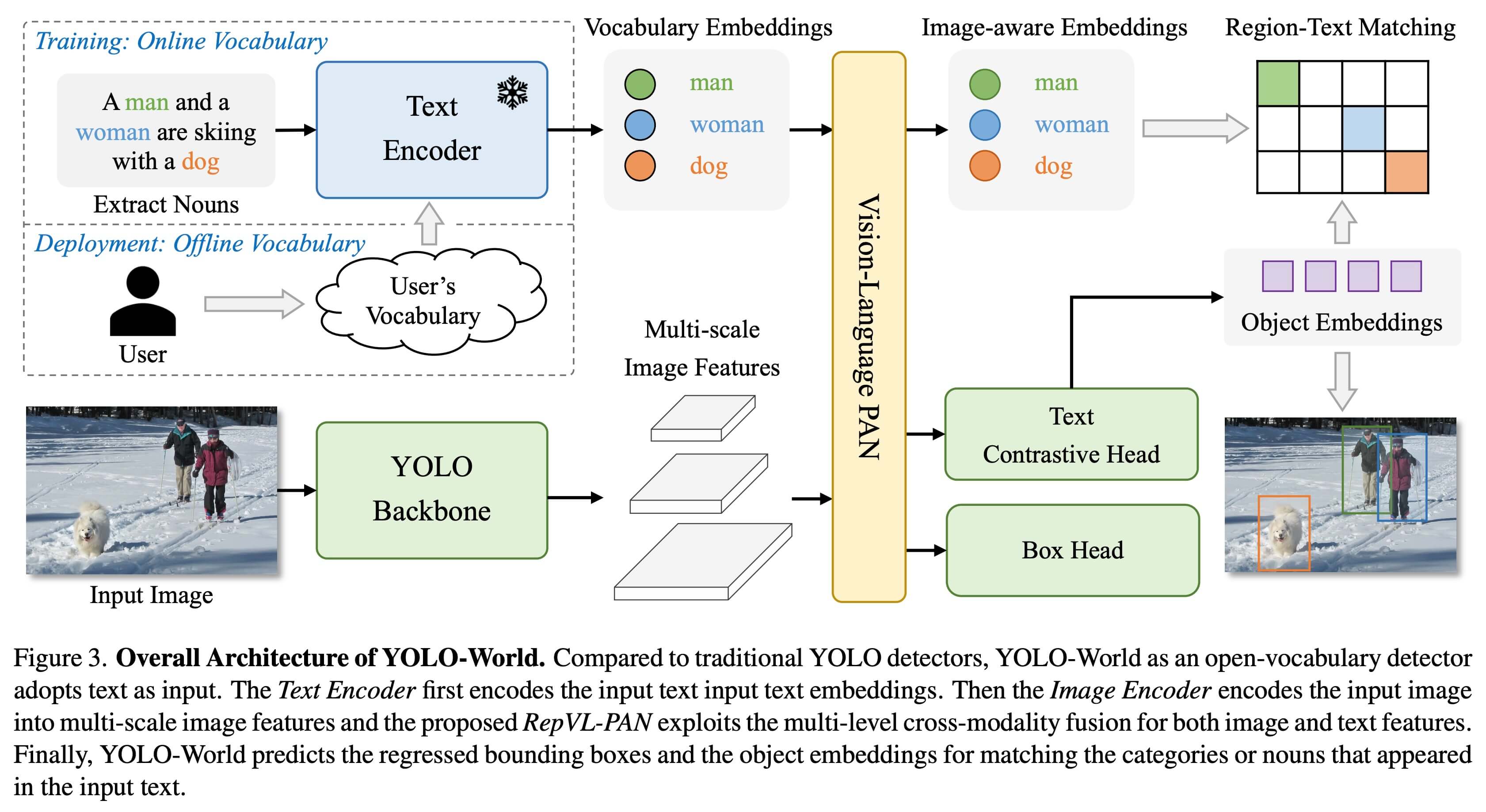

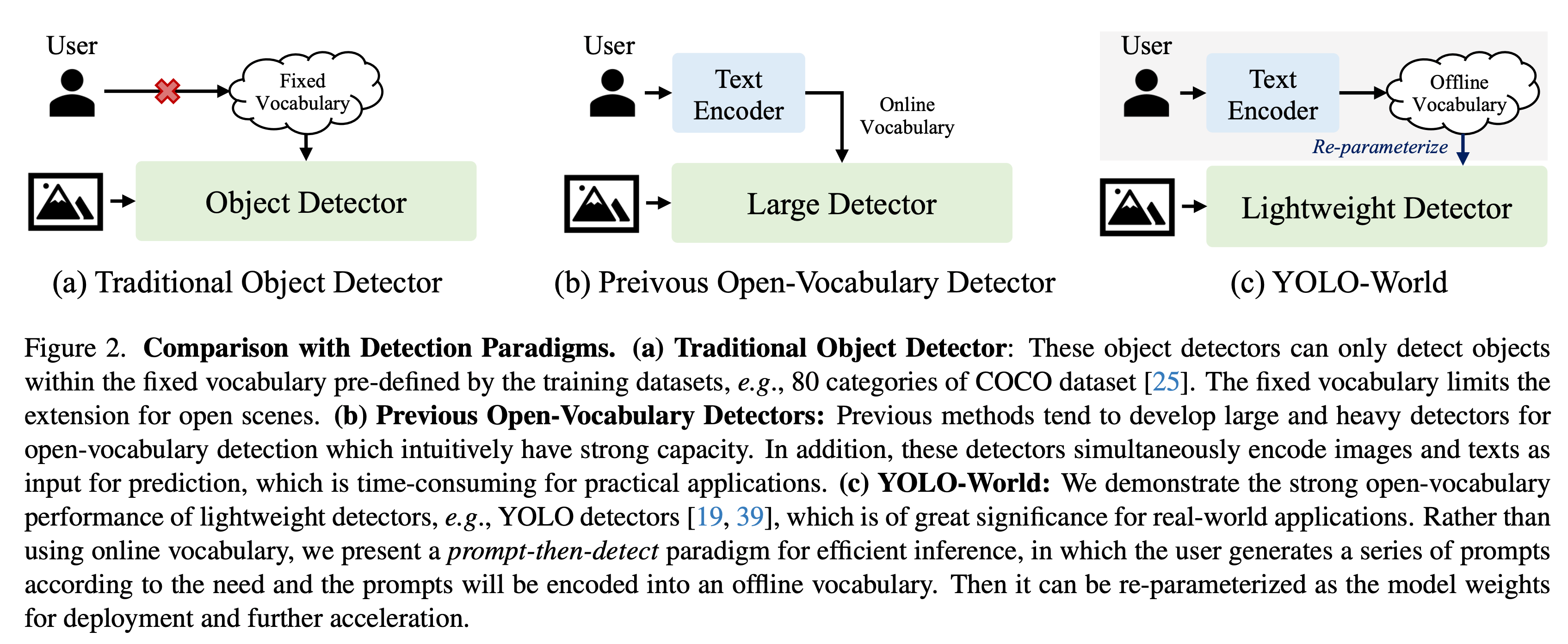

The YOLO-World Model introduces an advanced, real-time [Ultralytics](https://ultralytics.com) [YOLOv8](yolov8.md)-based approach for Open-Vocabulary Detection tasks. This innovation enables the detection of any object within an image based on descriptive texts. By significantly lowering computational demands while preserving competitive performance, YOLO-World emerges as a versatile tool for numerous vision-based applications.

|

||||

|

||||

|

||||

|

||||

## Overview

YOLO-World tackles the challenges faced by traditional Open-Vocabulary detection models, which often rely on cumbersome Transformer models requiring extensive computational resources. These models' dependence on pre-defined object categories also restricts their utility in dynamic scenarios. YOLO-World revitalizes the YOLOv8 framework with open-vocabulary detection capabilities, employing vision-language modeling and pre-training on expansive datasets to excel at identifying a broad array of objects in zero-shot scenarios with unmatched efficiency.


## Key Features

1. **Real-time Solution:** Harnessing the computational speed of CNNs, YOLO-World delivers a swift open-vocabulary detection solution, catering to industries in need of immediate results.

2. **Efficiency and Performance:** YOLO-World slashes computational and resource requirements without sacrificing performance, offering a robust alternative to models like SAM but at a fraction of the computational cost, enabling real-time applications.

3. **Inference with Offline Vocabulary:** YOLO-World introduces a "prompt-then-detect" strategy, employing an offline vocabulary to enhance efficiency further. Custom prompts, such as captions or categories, are encoded a priori and stored as offline vocabulary embeddings, streamlining the detection process.

4. **Powered by YOLOv8:** Built upon [Ultralytics YOLOv8](yolov8.md), YOLO-World leverages the latest advancements in real-time object detection to facilitate open-vocabulary detection with unparalleled accuracy and speed.

5. **Benchmark Excellence:** YOLO-World outperforms existing open-vocabulary detectors, including the MDETR and GLIP series, in terms of speed and efficiency on standard benchmarks, showcasing the strength of its YOLOv8 foundation on a single NVIDIA V100 GPU.

6. **Versatile Applications:** YOLO-World's innovative approach unlocks new possibilities for a multitude of vision tasks, delivering speed improvements by orders of magnitude over existing methods.

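The "prompt-then-detect" idea behind the offline vocabulary can be illustrated without any model: encode each class prompt once, cache the embeddings, and then label each detected region by its most similar cached embedding. In the toy sketch below, `embed_text` is a hypothetical stand-in for a real text encoder (it returns hand-picked 3-D vectors), and the region embeddings are also hand-written; only the caching and cosine-similarity matching pattern is meant to carry over.

```python
import math

text_encoder_calls = 0


def embed_text(prompt):
    """Hypothetical text encoder: returns a fixed toy vector per prompt."""
    global text_encoder_calls
    text_encoder_calls += 1
    toy_vectors = {"person": [1.0, 0.1, 0.0], "bus": [0.0, 1.0, 0.2], "dog": [0.1, 0.0, 1.0]}
    return toy_vectors[prompt]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# "Prompt" step: encode the vocabulary once, offline
vocabulary = ["person", "bus", "dog"]
offline_vocab = {name: embed_text(name) for name in vocabulary}


# "Detect" step: match region embeddings from any number of images against the cache
def label_regions(region_embeddings):
    return [max(offline_vocab, key=lambda name: cosine(offline_vocab[name], r)) for r in region_embeddings]


image_1 = [[0.9, 0.2, 0.1], [0.1, 0.8, 0.3]]
image_2 = [[0.0, 0.1, 0.9]]
print(label_regions(image_1), label_regions(image_2), text_encoder_calls)
```

However many images are processed, the text encoder runs only once per vocabulary entry, which is where the efficiency gain of an offline vocabulary comes from.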

## Available Models, Supported Tasks, and Operating Modes

This section details the models available with their specific pre-trained weights, the tasks they support, and their compatibility with various operating modes such as [Inference](../modes/predict.md), [Validation](../modes/val.md), [Training](../modes/train.md), and [Export](../modes/export.md), denoted by ✅ for supported modes and ❌ for unsupported modes.

!!! Note

All the YOLOv8-World weights have been migrated directly from the official [YOLO-World](https://github.com/AILab-CVC/YOLO-World) repository, and we gratefully acknowledge its authors' excellent contributions.

| Model Type      | Pre-trained Weights                                                                                     | Tasks Supported                        | Inference | Validation | Training | Export |
|-----------------|---------------------------------------------------------------------------------------------------------|----------------------------------------|-----------|------------|----------|--------|
| YOLOv8s-world   | [yolov8s-world.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolov8s-world.pt)     | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ❌      |
| YOLOv8s-worldv2 | [yolov8s-worldv2.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolov8s-worldv2.pt) | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ✅      |
| YOLOv8m-world   | [yolov8m-world.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolov8m-world.pt)     | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ❌      |
| YOLOv8m-worldv2 | [yolov8m-worldv2.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolov8m-worldv2.pt) | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ✅      |
| YOLOv8l-world   | [yolov8l-world.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolov8l-world.pt)     | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ❌      |
| YOLOv8l-worldv2 | [yolov8l-worldv2.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolov8l-worldv2.pt) | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ✅      |
| YOLOv8x-world   | [yolov8x-world.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolov8x-world.pt)     | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ❌      |
| YOLOv8x-worldv2 | [yolov8x-worldv2.pt](https://github.com/ultralytics/assets/releases/download/v8.1.0/yolov8x-worldv2.pt) | [Object Detection](../tasks/detect.md) | ✅         | ✅          | ❌        | ✅      |


## Zero-shot Transfer on COCO Dataset

| Model Type      | mAP  | mAP50 | mAP75 |
|-----------------|------|-------|-------|
| yolov8s-world   | 37.4 | 52.0  | 40.6  |
| yolov8s-worldv2 | 37.7 | 52.2  | 41.0  |
| yolov8m-world   | 42.0 | 57.0  | 45.6  |
| yolov8m-worldv2 | 43.0 | 58.4  | 46.8  |
| yolov8l-world   | 45.7 | 61.3  | 49.8  |
| yolov8l-worldv2 | 45.8 | 61.3  | 49.8  |
| yolov8x-world   | 47.0 | 63.0  | 51.2  |
| yolov8x-worldv2 | 47.1 | 62.8  | 51.4  |

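Reading the table row by row, the v2 weights track the original weights closely on zero-shot COCO. The snippet below computes the per-size mAP difference (v2 minus v1) from the table values, which makes it easy to see that the largest gain is at the medium size.

```python
# mAP values copied from the zero-shot COCO table above
map_v1 = {"s": 37.4, "m": 42.0, "l": 45.7, "x": 47.0}
map_v2 = {"s": 37.7, "m": 43.0, "l": 45.8, "x": 47.1}

# Round to one decimal to match the table's precision
deltas = {size: round(map_v2[size] - map_v1[size], 1) for size in map_v1}
print(deltas)
```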

## Usage Examples

The YOLO-World models are easy to integrate into your Python applications. Ultralytics provides a user-friendly Python API and CLI commands to streamline development.

### Predict Usage

Object detection is straightforward with the `predict` method, as illustrated below:


!!! Example

=== "Python"

```python
from ultralytics import YOLOWorld

# Initialize a YOLO-World model
model = YOLOWorld('yolov8s-world.pt')  # or select yolov8m/l-world.pt for different sizes

# Execute inference with the YOLOv8s-world model on the specified image
results = model.predict('path/to/image.jpg')

# Show results
results[0].show()
```

=== "CLI"

```bash
# Perform object detection using a YOLO-World model
yolo predict model=yolov8s-world.pt source=path/to/image.jpg imgsz=640
```


This snippet demonstrates the simplicity of loading a pre-trained model and running a prediction on an image.

### Val Usage

Model validation on a dataset is streamlined as follows:


!!! Example

=== "Python"

```python
from ultralytics import YOLO

# Create a YOLO-World model
model = YOLO('yolov8s-world.pt')  # or select yolov8m/l-world.pt for different sizes

# Conduct model validation on the COCO8 example dataset
metrics = model.val(data='coco8.yaml')
```

=== "CLI"

```bash
# Validate a YOLO-World model on the COCO8 dataset with a specified image size
yolo val model=yolov8s-world.pt data=coco8.yaml imgsz=640
```


!!! Note

The YOLO-World models provided by Ultralytics come pre-configured with [COCO dataset](../datasets/detect/coco.md) categories as part of their offline vocabulary, enhancing efficiency for immediate application. This integration allows the YOLOv8-World models to directly recognize and predict the 80 standard categories defined in the COCO dataset without requiring additional setup or customization.

### Set prompts


The YOLO-World framework allows for the dynamic specification of classes through custom prompts, empowering users to tailor the model to their specific needs **without retraining**. This feature is particularly useful for adapting the model to new domains or specific tasks that were not originally part of the training data. By setting custom prompts, users can essentially guide the model's focus towards objects of interest, enhancing the relevance and accuracy of the detection results.

For instance, if your application only requires detecting 'person' and 'bus' objects, you can specify these classes directly:


!!! Example

=== "Custom Inference Prompts"

```python
from ultralytics import YOLO

# Initialize a YOLO-World model
model = YOLO('yolov8s-world.pt')  # or choose yolov8m/l-world.pt

# Define custom classes
model.set_classes(["person", "bus"])

# Execute prediction for specified categories on an image
results = model.predict('path/to/image.jpg')

# Show results
results[0].show()
```


You can also save a model after setting custom classes. By doing this you create a version of the YOLO-World model that is specialized for your specific use case. This process embeds your custom class definitions directly into the model file, making the model ready to use with your specified classes without further adjustments. Follow these steps to save and load your custom YOLO-World model:


!!! Example

=== "Persisting Models with Custom Vocabulary"

First load a YOLO-World model, set custom classes for it and save it:

```python
from ultralytics import YOLO

# Initialize a YOLO-World model
model = YOLO('yolov8s-world.pt')  # or select yolov8m/l-world.pt

# Define custom classes
model.set_classes(["person", "bus"])

# Save the model with the defined offline vocabulary
model.save("custom_yolov8s.pt")
```
