mirror of

https://gitee.com/nanjing-yimao-information/ieemoo-ai-gift.git

synced 2025-08-23 23:50:25 +00:00

update

This commit is contained in:

152

docs/en/guides/azureml-quickstart.md

Normal file

152

docs/en/guides/azureml-quickstart.md

Normal file

@ -0,0 +1,152 @@

|

||||

---

|

||||

comments: true

|

||||

description: Step-by-step Quickstart Guide to Running YOLOv8 Object Detection Models on AzureML for Fast Prototyping and Testing

|

||||

keywords: Ultralytics, YOLOv8, Object Detection, Azure Machine Learning, Quickstart Guide, Prototype, Compute Instance, Terminal, Notebook, IPython Kernel, CLI, Python SDK

|

||||

---

|

||||

|

||||

# YOLOv8 🚀 on AzureML

|

||||

|

||||

## What is Azure?

|

||||

|

||||

[Azure](https://azure.microsoft.com/) is Microsoft's cloud computing platform, designed to help organizations move their workloads to the cloud from on-premises data centers. With the full spectrum of cloud services including those for computing, databases, analytics, machine learning, and networking, users can pick and choose from these services to develop and scale new applications, or run existing applications, in the public cloud.

|

||||

|

||||

## What is Azure Machine Learning (AzureML)?

|

||||

|

||||

Azure Machine Learning, commonly referred to as AzureML, is a fully managed cloud service that enables data scientists and developers to efficiently embed predictive analytics into their applications, helping organizations use massive data sets and bring all the benefits of the cloud to machine learning. AzureML offers a variety of services and capabilities aimed at making machine learning accessible, easy to use, and scalable. It provides capabilities like automated machine learning, drag-and-drop model training, as well as a robust Python SDK so that developers can make the most out of their machine learning models.

|

||||

|

||||

## How Does AzureML Benefit YOLO Users?

|

||||

|

||||

For users of YOLO (You Only Look Once), AzureML provides a robust, scalable, and efficient platform to both train and deploy machine learning models. Whether you are looking to run quick prototypes or scale up to handle more extensive data, AzureML's flexible and user-friendly environment offers various tools and services to fit your needs. You can leverage AzureML to:

|

||||

|

||||

- Easily manage large datasets and computational resources for training.

|

||||

- Utilize built-in tools for data preprocessing, feature selection, and model training.

|

||||

- Collaborate more efficiently with capabilities for MLOps (Machine Learning Operations), including but not limited to monitoring, auditing, and versioning of models and data.

|

||||

|

||||

In the subsequent sections, you will find a quickstart guide detailing how to run YOLOv8 object detection models using AzureML, either from a compute terminal or a notebook.

|

||||

|

||||

## Prerequisites

|

||||

|

||||

Before you can get started, make sure you have access to an AzureML workspace. If you don't have one, you can create a new [AzureML workspace](https://learn.microsoft.com/azure/machine-learning/concept-workspace?view=azureml-api-2) by following Azure's official documentation. This workspace acts as a centralized place to manage all AzureML resources.

|

||||

|

||||

## Create a compute instance

|

||||

|

||||

From your AzureML workspace, select Compute > Compute instances > New, select the instance with the resources you need.

|

||||

|

||||

<p align="center">

|

||||

<img width="1280" src="https://github.com/ouphi/ultralytics/assets/17216799/3e92fcc0-a08e-41a4-af81-d289cfe3b8f2" alt="Create Azure Compute Instance">

|

||||

</p>

|

||||

|

||||

## Quickstart from Terminal

|

||||

|

||||

Start your compute and open a Terminal:

|

||||

|

||||

<p align="center">

|

||||

<img width="480" src="https://github.com/ouphi/ultralytics/assets/17216799/635152f1-f4a3-4261-b111-d416cb5ef357" alt="Open Terminal">

|

||||

</p>

|

||||

|

||||

### Create virtualenv

|

||||

|

||||

Create your conda virtualenv and install pip in it:

|

||||

|

||||

```bash

|

||||

conda create --name yolov8env -y

|

||||

conda activate yolov8env

|

||||

conda install pip -y

|

||||

```

|

||||

|

||||

Install the required dependencies:

|

||||

|

||||

```bash

|

||||

cd ultralytics

|

||||

pip install -r requirements.txt

|

||||

pip install ultralytics

|

||||

pip install onnx>=1.12.0

|

||||

```

|

||||

|

||||

### Perform YOLOv8 tasks

|

||||

|

||||

Predict:

|

||||

|

||||

```bash

|

||||

yolo predict model=yolov8n.pt source='https://ultralytics.com/images/bus.jpg'

|

||||

```

|

||||

|

||||

Train a detection model for 10 epochs with an initial learning_rate of 0.01:

|

||||

|

||||

```bash

|

||||

yolo train data=coco128.yaml model=yolov8n.pt epochs=10 lr0=0.01

|

||||

```

|

||||

|

||||

You can find more [instructions to use the Ultralytics CLI here](../quickstart.md#use-ultralytics-with-cli).

|

||||

|

||||

## Quickstart from a Notebook

|

||||

|

||||

### Create a new IPython kernel

|

||||

|

||||

Open the compute Terminal.

|

||||

|

||||

<p align="center">

|

||||

<img width="480" src="https://github.com/ouphi/ultralytics/assets/17216799/635152f1-f4a3-4261-b111-d416cb5ef357" alt="Open Terminal">

|

||||

</p>

|

||||

|

||||

From your compute terminal, you need to create a new ipykernel that will be used by your notebook to manage your dependencies:

|

||||

|

||||

```bash

|

||||

conda create --name yolov8env -y

|

||||

conda activate yolov8env

|

||||

conda install pip -y

|

||||

conda install ipykernel -y

|

||||

python -m ipykernel install --user --name yolov8env --display-name "yolov8env"

|

||||

```

|

||||

|

||||

Close your terminal and create a new notebook. From your Notebook, you can select the new kernel.

|

||||

|

||||

Then you can open a Notebook cell and install the required dependencies:

|

||||

|

||||

```bash

|

||||

%%bash

|

||||

source activate yolov8env

|

||||

cd ultralytics

|

||||

pip install -r requirements.txt

|

||||

pip install ultralytics

|

||||

pip install onnx>=1.12.0

|

||||

```

|

||||

|

||||

Note that we need to use the `source activate yolov8env` for all the %%bash cells, to make sure that the %%bash cell uses environment we want.

|

||||

|

||||

Run some predictions using the [Ultralytics CLI](../quickstart.md#use-ultralytics-with-cli):

|

||||

|

||||

```bash

|

||||

%%bash

|

||||

source activate yolov8env

|

||||

yolo predict model=yolov8n.pt source='https://ultralytics.com/images/bus.jpg'

|

||||

```

|

||||

|

||||

Or with the [Ultralytics Python interface](../quickstart.md#use-ultralytics-with-python), for example to train the model:

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

|

||||

# Load a model

|

||||

model = YOLO("yolov8n.pt") # load an official YOLOv8n model

|

||||

|

||||

# Use the model

|

||||

model.train(data="coco128.yaml", epochs=3) # train the model

|

||||

metrics = model.val() # evaluate model performance on the validation set

|

||||

results = model("https://ultralytics.com/images/bus.jpg") # predict on an image

|

||||

path = model.export(format="onnx") # export the model to ONNX format

|

||||

```

|

||||

|

||||

You can use either the Ultralytics CLI or Python interface for running YOLOv8 tasks, as described in the terminal section above.

|

||||

|

||||

By following these steps, you should be able to get YOLOv8 running quickly on AzureML for quick trials. For more advanced uses, you may refer to the full AzureML documentation linked at the beginning of this guide.

|

||||

|

||||

## Explore More with AzureML

|

||||

|

||||

This guide serves as an introduction to get you up and running with YOLOv8 on AzureML. However, it only scratches the surface of what AzureML can offer. To delve deeper and unlock the full potential of AzureML for your machine learning projects, consider exploring the following resources:

|

||||

|

||||

- [Create a Data Asset](https://learn.microsoft.com/azure/machine-learning/how-to-create-data-assets): Learn how to set up and manage your data assets effectively within the AzureML environment.

|

||||

- [Initiate an AzureML Job](https://learn.microsoft.com/azure/machine-learning/how-to-train-model): Get a comprehensive understanding of how to kickstart your machine learning training jobs on AzureML.

|

||||

- [Register a Model](https://learn.microsoft.com/azure/machine-learning/how-to-manage-models): Familiarize yourself with model management practices including registration, versioning, and deployment.

|

||||

- [Train YOLOv8 with AzureML Python SDK](https://medium.com/@ouphi/how-to-train-the-yolov8-model-with-azure-machine-learning-python-sdk-8268696be8ba): Explore a step-by-step guide on using the AzureML Python SDK to train your YOLOv8 models.

|

||||

- [Train YOLOv8 with AzureML CLI](https://medium.com/@ouphi/how-to-train-the-yolov8-model-with-azureml-and-the-az-cli-73d3c870ba8e): Discover how to utilize the command-line interface for streamlined training and management of YOLOv8 models on AzureML.

|

||||

132

docs/en/guides/conda-quickstart.md

Normal file

132

docs/en/guides/conda-quickstart.md

Normal file

@ -0,0 +1,132 @@

|

||||

---

|

||||

comments: true

|

||||

description: Comprehensive guide to setting up and using Ultralytics YOLO models in a Conda environment. Learn how to install the package, manage dependencies, and get started with object detection projects.

|

||||

keywords: Ultralytics, YOLO, Conda, environment setup, object detection, package installation, deep learning, machine learning, guide

|

||||

---

|

||||

|

||||

# Conda Quickstart Guide for Ultralytics

|

||||

|

||||

<p align="center">

|

||||

<img width="800" src="https://user-images.githubusercontent.com/26833433/266324397-32119e21-8c86-43e5-a00e-79827d303d10.png" alt="Ultralytics Conda Package Visual">

|

||||

</p>

|

||||

|

||||

This guide provides a comprehensive introduction to setting up a Conda environment for your Ultralytics projects. Conda is an open-source package and environment management system that offers an excellent alternative to pip for installing packages and dependencies. Its isolated environments make it particularly well-suited for data science and machine learning endeavors. For more details, visit the Ultralytics Conda package on [Anaconda](https://anaconda.org/conda-forge/ultralytics) and check out the Ultralytics feedstock repository for package updates on [GitHub](https://github.com/conda-forge/ultralytics-feedstock/).

|

||||

|

||||

[](https://anaconda.org/conda-forge/ultralytics) [](https://anaconda.org/conda-forge/ultralytics) [](https://anaconda.org/conda-forge/ultralytics) [](https://anaconda.org/conda-forge/ultralytics)

|

||||

|

||||

## What You Will Learn

|

||||

|

||||

- Setting up a Conda environment

|

||||

- Installing Ultralytics via Conda

|

||||

- Initializing Ultralytics in your environment

|

||||

- Using Ultralytics Docker images with Conda

|

||||

|

||||

---

|

||||

|

||||

## Prerequisites

|

||||

|

||||

- You should have Anaconda or Miniconda installed on your system. If not, download and install it from [Anaconda](https://www.anaconda.com/) or [Miniconda](https://docs.conda.io/projects/miniconda/en/latest/).

|

||||

|

||||

---

|

||||

|

||||

## Setting up a Conda Environment

|

||||

|

||||

First, let's create a new Conda environment. Open your terminal and run the following command:

|

||||

|

||||

```bash

|

||||

conda create --name ultralytics-env python=3.8 -y

|

||||

```

|

||||

|

||||

Activate the new environment:

|

||||

|

||||

```bash

|

||||

conda activate ultralytics-env

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Installing Ultralytics

|

||||

|

||||

You can install the Ultralytics package from the conda-forge channel. Execute the following command:

|

||||

|

||||

```bash

|

||||

conda install -c conda-forge ultralytics

|

||||

```

|

||||

|

||||

### Note on CUDA Environment

|

||||

|

||||

If you're working in a CUDA-enabled environment, it's a good practice to install `ultralytics`, `pytorch`, and `pytorch-cuda` together to resolve any conflicts:

|

||||

|

||||

```bash

|

||||

conda install -c pytorch -c nvidia -c conda-forge pytorch torchvision pytorch-cuda=11.8 ultralytics

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Using Ultralytics

|

||||

|

||||

With Ultralytics installed, you can now start using its robust features for object detection, instance segmentation, and more. For example, to predict an image, you can run:

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

|

||||

model = YOLO('yolov8n.pt') # initialize model

|

||||

results = model('path/to/image.jpg') # perform inference

|

||||

results[0].show() # display results for the first image

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Ultralytics Conda Docker Image

|

||||

|

||||

If you prefer using Docker, Ultralytics offers Docker images with a Conda environment included. You can pull these images from [DockerHub](https://hub.docker.com/r/ultralytics/ultralytics).

|

||||

|

||||

Pull the latest Ultralytics image:

|

||||

|

||||

```bash

|

||||

# Set image name as a variable

|

||||

t=ultralytics/ultralytics:latest-conda

|

||||

|

||||

# Pull the latest Ultralytics image from Docker Hub

|

||||

sudo docker pull $t

|

||||

```

|

||||

|

||||

Run the image:

|

||||

|

||||

```bash

|

||||

# Run the Ultralytics image in a container with GPU support

|

||||

sudo docker run -it --ipc=host --gpus all $t # all GPUs

|

||||

sudo docker run -it --ipc=host --gpus '"device=2,3"' $t # specify GPUs

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

Certainly, you can include the following section in your Conda guide to inform users about speeding up installation using `libmamba`:

|

||||

|

||||

---

|

||||

|

||||

## Speeding Up Installation with Libmamba

|

||||

|

||||

If you're looking to [speed up the package installation](https://www.anaconda.com/blog/a-faster-conda-for-a-growing-community) process in Conda, you can opt to use `libmamba`, a fast, cross-platform, and dependency-aware package manager that serves as an alternative solver to Conda's default.

|

||||

|

||||

### How to Enable Libmamba

|

||||

|

||||

To enable `libmamba` as the solver for Conda, you can perform the following steps:

|

||||

|

||||

1. First, install the `conda-libmamba-solver` package. This can be skipped if your Conda version is 4.11 or above, as `libmamba` is included by default.

|

||||

|

||||

```bash

|

||||

conda install conda-libmamba-solver

|

||||

```

|

||||

|

||||

2. Next, configure Conda to use `libmamba` as the solver:

|

||||

|

||||

```bash

|

||||

conda config --set solver libmamba

|

||||

```

|

||||

|

||||

And that's it! Your Conda installation will now use `libmamba` as the solver, which should result in a faster package installation process.

|

||||

|

||||

---

|

||||

|

||||

Congratulations! You have successfully set up a Conda environment, installed the Ultralytics package, and are now ready to explore its rich functionalities. Feel free to dive deeper into the [Ultralytics documentation](../index.md) for more advanced tutorials and examples.

|

||||

140

docs/en/guides/coral-edge-tpu-on-raspberry-pi.md

Normal file

140

docs/en/guides/coral-edge-tpu-on-raspberry-pi.md

Normal file

@ -0,0 +1,140 @@

|

||||

---

|

||||

comments: true

|

||||

description: Guide on how to use Ultralytics with a Coral Edge TPU on a Raspberry Pi for increased inference performance.

|

||||

keywords: Ultralytics, YOLOv8, Object Detection, Coral, Edge TPU, Raspberry Pi, embedded, edge compute, sbc, accelerator, mobile

|

||||

---

|

||||

|

||||

# Coral Edge TPU on a Raspberry Pi with Ultralytics YOLOv8 🚀

|

||||

|

||||

<p align="center">

|

||||

<img width="800" src="https://images.ctfassets.net/2lpsze4g694w/5XK2dV0w55U0TefijPli1H/bf0d119d77faef9a5d2cc0dad2aa4b42/Edge-TPU-USB-Accelerator-and-Pi.jpg?w=800" alt="Raspberry Pi single board computer with USB Edge TPU accelerator">

|

||||

</p>

|

||||

|

||||

## What is a Coral Edge TPU?

|

||||

|

||||

The Coral Edge TPU is a compact device that adds an Edge TPU coprocessor to your system. It enables low-power, high-performance ML inference for TensorFlow Lite models. Read more at the [Coral Edge TPU home page](https://coral.ai/products/accelerator).

|

||||

|

||||

## Boost Raspberry Pi Model Performance with Coral Edge TPU

|

||||

|

||||

Many people want to run their models on an embedded or mobile device such as a Raspberry Pi, since they are very power efficient and can be used in many different applications. However, the inference performance on these devices is usually poor even when using formats like [onnx](../integrations/onnx.md) or [openvino](../integrations/openvino.md). The Coral Edge TPU is a great solution to this problem, since it can be used with a Raspberry Pi and accelerate inference performance greatly.

|

||||

|

||||

## Edge TPU on Raspberry Pi with TensorFlow Lite (New)⭐

|

||||

|

||||

The [existing guide](https://coral.ai/docs/accelerator/get-started/) by Coral on how to use the Edge TPU with a Raspberry Pi is outdated, and the current Coral Edge TPU runtime builds do not work with the current TensorFlow Lite runtime versions anymore. In addition to that, Google seems to have completely abandoned the Coral project, and there have not been any updates between 2021 and 2024. This guide will show you how to get the Edge TPU working with the latest versions of the TensorFlow Lite runtime and an updated Coral Edge TPU runtime on a Raspberry Pi single board computer (SBC).

|

||||

|

||||

## Prerequisites

|

||||

|

||||

- [Raspberry Pi 4B](https://www.raspberrypi.com/products/raspberry-pi-4-model-b/) (2GB or more recommended) or [Raspberry Pi 5](https://www.raspberrypi.com/products/raspberry-pi-5/) (Recommended)

|

||||

- [Raspberry Pi OS](https://www.raspberrypi.com/software/) Bullseye/Bookworm (64-bit) with desktop (Recommended)

|

||||

- [Coral USB Accelerator](https://coral.ai/products/accelerator/)

|

||||

- A non-ARM based platform for exporting an Ultralytics PyTorch model

|

||||

|

||||

## Installation Walkthrough

|

||||

|

||||

This guide assumes that you already have a working Raspberry Pi OS install and have installed `ultralytics` and all dependencies. To get `ultralytics` installed, visit the [quickstart guide](../quickstart.md) to get setup before continuing here.

|

||||

|

||||

### Installing the Edge TPU runtime

|

||||

|

||||

First, we need to install the Edge TPU runtime. There are many different versions available, so you need to choose the right version for your operating system.

|

||||

|

||||

| Raspberry Pi OS | High frequency mode | Version to download |

|

||||

|-----------------|:-------------------:|--------------------------------------------|

|

||||

| Bullseye 32bit | No | `libedgetpu1-std_ ... .bullseye_armhf.deb` |

|

||||

| Bullseye 64bit | No | `libedgetpu1-std_ ... .bullseye_arm64.deb` |

|

||||

| Bullseye 32bit | Yes | `libedgetpu1-max_ ... .bullseye_armhf.deb` |

|

||||

| Bullseye 64bit | Yes | `libedgetpu1-max_ ... .bullseye_arm64.deb` |

|

||||

| Bookworm 32bit | No | `libedgetpu1-std_ ... .bookworm_armhf.deb` |

|

||||

| Bookworm 64bit | No | `libedgetpu1-std_ ... .bookworm_arm64.deb` |

|

||||

| Bookworm 32bit | Yes | `libedgetpu1-max_ ... .bookworm_armhf.deb` |

|

||||

| Bookworm 64bit | Yes | `libedgetpu1-max_ ... .bookworm_arm64.deb` |

|

||||

|

||||

[Download the latest version from here](https://github.com/feranick/libedgetpu/releases).

|

||||

|

||||

After downloading the file, you can install it with the following command:

|

||||

|

||||

```bash

|

||||

sudo dpkg -i path/to/package.deb

|

||||

```

|

||||

|

||||

After installing the runtime, you need to plug in your Coral Edge TPU into a USB 3.0 port on your Raspberry Pi. This is because, according to the official guide, a new `udev` rule needs to take effect after installation.

|

||||

|

||||

???+ warning "Important"

|

||||

|

||||

If you already have the Coral Edge TPU runtime installed, uninstall it using the following command.

|

||||

|

||||

```bash

|

||||

# If you installed the standard version

|

||||

sudo apt remove libedgetpu1-std

|

||||

|

||||

# If you installed the high frequency version

|

||||

sudo apt remove libedgetpu1-max

|

||||

```

|

||||

|

||||

## Export your model to a Edge TPU compatible model

|

||||

|

||||

To use the Edge TPU, you need to convert your model into a compatible format. It is recommended that you run export on Google Colab, x86_64 Linux machine, using the official [Ultralytics Docker container](docker-quickstart.md), or using [Ultralytics HUB](../hub/quickstart.md), since the Edge TPU compiler is not available on ARM. See the [Export Mode](../modes/export.md) for the available arguments.

|

||||

|

||||

!!! Exporting the model

|

||||

|

||||

=== "Python"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

|

||||

# Load a model

|

||||

model = YOLO('path/to/model.pt') # Load a official model or custom model

|

||||

|

||||

# Export the model

|

||||

model.export(format='edgetpu')

|

||||

```

|

||||

|

||||

=== "CLI"

|

||||

|

||||

```bash

|

||||

yolo export model=path/to/model.pt format=edgetpu # Export a official model or custom model

|

||||

```

|

||||

|

||||

The exported model will be saved in the `<model_name>_saved_model/` folder with the name `<model_name>_full_integer_quant_edgetpu.tflite`.

|

||||

|

||||

## Running the model

|

||||

|

||||

After exporting your model, you can run inference with it using the following code:

|

||||

|

||||

!!! Running the model

|

||||

|

||||

=== "Python"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

|

||||

# Load a model

|

||||

model = YOLO('path/to/edgetpu_model.tflite') # Load a official model or custom model

|

||||

|

||||

# Run Prediction

|

||||

model.predict("path/to/source.png")

|

||||

```

|

||||

|

||||

=== "CLI"

|

||||

|

||||

```bash

|

||||

yolo predict model=path/to/edgetpu_model.tflite source=path/to/source.png # Load a official model or custom model

|

||||

```

|

||||

|

||||

Find comprehensive information on the [Predict](../modes/predict.md) page for full prediction mode details.

|

||||

|

||||

???+ warning "Important"

|

||||

|

||||

You should run the model using `tflite-runtime` and not `tensorflow`.

|

||||

If `tensorflow` is installed, uninstall tensorflow with the following command:

|

||||

|

||||

```bash

|

||||

pip uninstall tensorflow tensorflow-aarch64

|

||||

```

|

||||

|

||||

Then install/update `tflite-runtime`:

|

||||

|

||||

```

|

||||

pip install -U tflite-runtime

|

||||

```

|

||||

|

||||

If you want a `tflite-runtime` wheel for `tensorflow` 2.15.0 download it from [here](https://github.com/feranick/TFlite-builds/releases) and install it using `pip` or your package manager of choice.

|

||||

107

docs/en/guides/distance-calculation.md

Normal file

107

docs/en/guides/distance-calculation.md

Normal file

@ -0,0 +1,107 @@

|

||||

---

|

||||

comments: true

|

||||

description: Distance Calculation Using Ultralytics YOLOv8

|

||||

keywords: Ultralytics, YOLOv8, Object Detection, Distance Calculation, Object Tracking, Notebook, IPython Kernel, CLI, Python SDK

|

||||

---

|

||||

|

||||

# Distance Calculation using Ultralytics YOLOv8 🚀

|

||||

|

||||

## What is Distance Calculation?

|

||||

|

||||

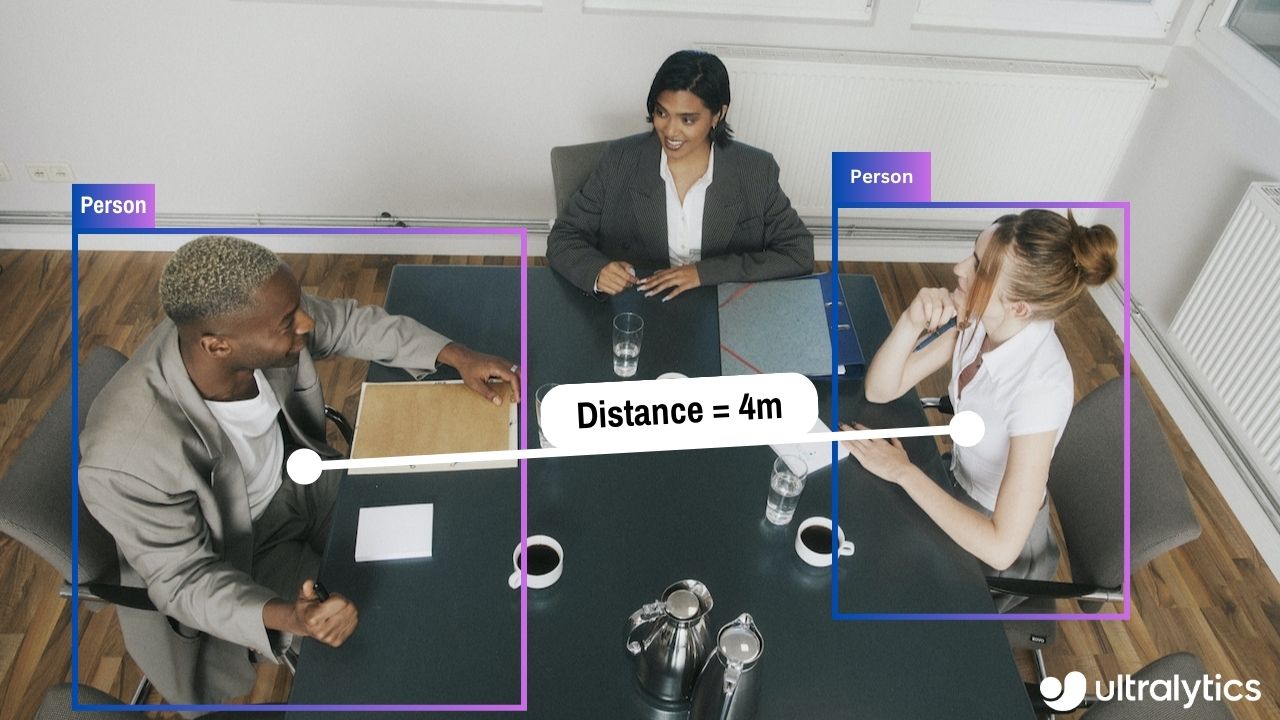

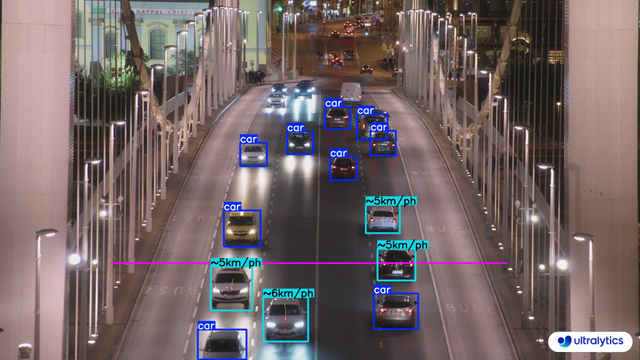

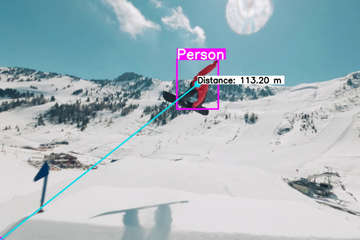

Measuring the gap between two objects is known as distance calculation within a specified space. In the case of [Ultralytics YOLOv8](https://github.com/ultralytics/ultralytics), the bounding box centroid is employed to calculate the distance for bounding boxes highlighted by the user.

|

||||

|

||||

<p align="center">

|

||||

<br>

|

||||

<iframe loading="lazy" width="720" height="405" src="https://www.youtube.com/embed/LE8am1QoVn4"

|

||||

title="YouTube video player" frameborder="0"

|

||||

allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share"

|

||||

allowfullscreen>

|

||||

</iframe>

|

||||

<br>

|

||||

<strong>Watch:</strong> Distance Calculation using Ultralytics YOLOv8

|

||||

</p>

|

||||

|

||||

## Visuals

|

||||

|

||||

| Distance Calculation using Ultralytics YOLOv8 |

|

||||

|:-----------------------------------------------------------------------------------------------------------------------------------------------:|

|

||||

|  |

|

||||

|

||||

## Advantages of Distance Calculation?

|

||||

|

||||

- **Localization Precision:** Enhances accurate spatial positioning in computer vision tasks.

|

||||

- **Size Estimation:** Allows estimation of physical sizes for better contextual understanding.

|

||||

- **Scene Understanding:** Contributes to a 3D understanding of the environment for improved decision-making.

|

||||

|

||||

???+ tip "Distance Calculation"

|

||||

|

||||

- Click on any two bounding boxes with Left Mouse click for distance calculation

|

||||

|

||||

!!! Example "Distance Calculation using YOLOv8 Example"

|

||||

|

||||

=== "Video Stream"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

from ultralytics.solutions import distance_calculation

|

||||

import cv2

|

||||

|

||||

model = YOLO("yolov8n.pt")

|

||||

names = model.model.names

|

||||

|

||||

cap = cv2.VideoCapture("path/to/video/file.mp4")

|

||||

assert cap.isOpened(), "Error reading video file"

|

||||

w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

|

||||

|

||||

# Video writer

|

||||

video_writer = cv2.VideoWriter("distance_calculation.avi",

|

||||

cv2.VideoWriter_fourcc(*'mp4v'),

|

||||

fps,

|

||||

(w, h))

|

||||

|

||||

# Init distance-calculation obj

|

||||

dist_obj = distance_calculation.DistanceCalculation()

|

||||

dist_obj.set_args(names=names, view_img=True)

|

||||

|

||||

while cap.isOpened():

|

||||

success, im0 = cap.read()

|

||||

if not success:

|

||||

print("Video frame is empty or video processing has been successfully completed.")

|

||||

break

|

||||

|

||||

tracks = model.track(im0, persist=True, show=False)

|

||||

im0 = dist_obj.start_process(im0, tracks)

|

||||

video_writer.write(im0)

|

||||

|

||||

cap.release()

|

||||

video_writer.release()

|

||||

cv2.destroyAllWindows()

|

||||

|

||||

```

|

||||

|

||||

???+ tip "Note"

|

||||

|

||||

- Mouse Right Click will delete all drawn points

|

||||

- Mouse Left Click can be used to draw points

|

||||

|

||||

### Optional Arguments `set_args`

|

||||

|

||||

| Name | Type | Default | Description |

|

||||

|------------------|--------|-----------------|--------------------------------------------------------|

|

||||

| `names` | `dict` | `None` | Classes names |

|

||||

| `view_img` | `bool` | `False` | Display frames with counts |

|

||||

| `line_thickness` | `int` | `2` | Increase bounding boxes thickness |

|

||||

| `line_color` | `RGB` | `(255, 255, 0)` | Line Color for centroids mapping on two bounding boxes |

|

||||

| `centroid_color` | `RGB` | `(255, 0, 255)` | Centroid color for each bounding box |

|

||||

|

||||

### Arguments `model.track`

|

||||

|

||||

| Name | Type | Default | Description |

|

||||

|-----------|---------|----------------|-------------------------------------------------------------|

|

||||

| `source` | `im0` | `None` | source directory for images or videos |

|

||||

| `persist` | `bool` | `False` | persisting tracks between frames |

|

||||

| `tracker` | `str` | `botsort.yaml` | Tracking method 'bytetrack' or 'botsort' |

|

||||

| `conf` | `float` | `0.3` | Confidence Threshold |

|

||||

| `iou` | `float` | `0.5` | IOU Threshold |

|

||||

| `classes` | `list` | `None` | filter results by class, i.e. classes=0, or classes=[0,2,3] |

|

||||

| `verbose` | `bool` | `True` | Display the object tracking results |

|

||||

119

docs/en/guides/docker-quickstart.md

Normal file

119

docs/en/guides/docker-quickstart.md

Normal file

@ -0,0 +1,119 @@

|

||||

---

|

||||

comments: true

|

||||

description: Complete guide to setting up and using Ultralytics YOLO models with Docker. Learn how to install Docker, manage GPU support, and run YOLO models in isolated containers.

|

||||

keywords: Ultralytics, YOLO, Docker, GPU, containerization, object detection, package installation, deep learning, machine learning, guide

|

||||

---

|

||||

|

||||

# Docker Quickstart Guide for Ultralytics

|

||||

|

||||

<p align="center">

|

||||

<img width="800" src="https://user-images.githubusercontent.com/26833433/270173601-fc7011bd-e67c-452f-a31a-aa047dcd2771.png" alt="Ultralytics Docker Package Visual">

|

||||

</p>

|

||||

|

||||

This guide serves as a comprehensive introduction to setting up a Docker environment for your Ultralytics projects. [Docker](https://docker.com/) is a platform for developing, shipping, and running applications in containers. It is particularly beneficial for ensuring that the software will always run the same, regardless of where it's deployed. For more details, visit the Ultralytics Docker repository on [Docker Hub](https://hub.docker.com/r/ultralytics/ultralytics).

|

||||

|

||||

[](https://hub.docker.com/r/ultralytics/ultralytics)

|

||||

|

||||

## What You Will Learn

|

||||

|

||||

- Setting up Docker with NVIDIA support

|

||||

- Installing Ultralytics Docker images

|

||||

- Running Ultralytics in a Docker container

|

||||

- Mounting local directories into the container

|

||||

|

||||

---

|

||||

|

||||

## Prerequisites

|

||||

|

||||

- Make sure Docker is installed on your system. If not, you can download and install it from [Docker's website](https://www.docker.com/products/docker-desktop).

|

||||

- Ensure that your system has an NVIDIA GPU and NVIDIA drivers are installed.

|

||||

|

||||

---

|

||||

|

||||

## Setting up Docker with NVIDIA Support

|

||||

|

||||

First, verify that the NVIDIA drivers are properly installed by running:

|

||||

|

||||

```bash

|

||||

nvidia-smi

|

||||

```

|

||||

|

||||

### Installing NVIDIA Docker Runtime

|

||||

|

||||

Now, let's install the NVIDIA Docker runtime to enable GPU support in Docker containers:

|

||||

|

||||

```bash

|

||||

# Add NVIDIA package repositories

|

||||

curl -s -L https://nvidia.github.io/nvidia-docker/gpgkey | sudo apt-key add -

|

||||

distribution=$(lsb_release -cs)

|

||||

curl -s -L https://nvidia.github.io/nvidia-docker/$distribution/nvidia-docker.list | sudo tee /etc/apt/sources.list.d/nvidia-docker.list

|

||||

|

||||

# Install NVIDIA Docker runtime

|

||||

sudo apt-get update

|

||||

sudo apt-get install -y nvidia-docker2

|

||||

|

||||

# Restart Docker service to apply changes

|

||||

sudo systemctl restart docker

|

||||

```

|

||||

|

||||

### Verify NVIDIA Runtime with Docker

|

||||

|

||||

Run `docker info | grep -i runtime` to ensure that `nvidia` appears in the list of runtimes:

|

||||

|

||||

```bash

|

||||

docker info | grep -i runtime

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Installing Ultralytics Docker Images

|

||||

|

||||

Ultralytics offers several Docker images optimized for various platforms and use-cases:

|

||||

|

||||

- **Dockerfile:** GPU image, ideal for training.

|

||||

- **Dockerfile-arm64:** For ARM64 architecture, suitable for devices like [Raspberry Pi](raspberry-pi.md).

|

||||

- **Dockerfile-cpu:** CPU-only version for inference and non-GPU environments.

|

||||

- **Dockerfile-jetson:** Optimized for NVIDIA Jetson devices.

|

||||

- **Dockerfile-python:** Minimal Python environment for lightweight applications.

|

||||

- **Dockerfile-conda:** Includes [Miniconda3](https://docs.conda.io/projects/miniconda/en/latest/) and Ultralytics package installed via Conda.

|

||||

|

||||

To pull the latest image:

|

||||

|

||||

```bash

|

||||

# Set image name as a variable

|

||||

t=ultralytics/ultralytics:latest

|

||||

|

||||

# Pull the latest Ultralytics image from Docker Hub

|

||||

sudo docker pull $t

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Running Ultralytics in Docker Container

|

||||

|

||||

Here's how to execute the Ultralytics Docker container:

|

||||

|

||||

```bash

|

||||

# Run with all GPUs

|

||||

sudo docker run -it --ipc=host --gpus all $t

|

||||

|

||||

# Run specifying which GPUs to use

|

||||

sudo docker run -it --ipc=host --gpus '"device=2,3"' $t

|

||||

```

|

||||

|

||||

The `-it` flag assigns a pseudo-TTY and keeps stdin open, allowing you to interact with the container. The `--ipc=host` flag enables sharing of host's IPC namespace, essential for sharing memory between processes. The `--gpus` flag allows the container to access the host's GPUs.

|

||||

|

||||

### Note on File Accessibility

|

||||

|

||||

To work with files on your local machine within the container, you can use Docker volumes:

|

||||

|

||||

```bash

|

||||

# Mount a local directory into the container

|

||||

sudo docker run -it --ipc=host --gpus all -v /path/on/host:/path/in/container $t

|

||||

```

|

||||

|

||||

Replace `/path/on/host` with the directory path on your local machine and `/path/in/container` with the desired path inside the Docker container.

|

||||

|

||||

---

|

||||

|

||||

Congratulations! You're now set up to use Ultralytics with Docker and ready to take advantage of its powerful capabilities. For alternate installation methods, feel free to explore the [Ultralytics quickstart documentation](../quickstart.md).

|

||||

301

docs/en/guides/heatmaps.md

Normal file

301

docs/en/guides/heatmaps.md

Normal file

@ -0,0 +1,301 @@

|

||||

---

|

||||

comments: true

|

||||

description: Advanced Data Visualization with Ultralytics YOLOv8 Heatmaps

|

||||

keywords: Ultralytics, YOLOv8, Advanced Data Visualization, Heatmap Technology, Object Detection and Tracking, Jupyter Notebook, Python SDK, Command Line Interface

|

||||

---

|

||||

|

||||

# Advanced Data Visualization: Heatmaps using Ultralytics YOLOv8 🚀

|

||||

|

||||

## Introduction to Heatmaps

|

||||

|

||||

A heatmap generated with [Ultralytics YOLOv8](https://github.com/ultralytics/ultralytics/) transforms complex data into a vibrant, color-coded matrix. This visual tool employs a spectrum of colors to represent varying data values, where warmer hues indicate higher intensities and cooler tones signify lower values. Heatmaps excel in visualizing intricate data patterns, correlations, and anomalies, offering an accessible and engaging approach to data interpretation across diverse domains.

|

||||

|

||||

<p align="center">

|

||||

<br>

|

||||

<iframe loading="lazy" width="720" height="405" src="https://www.youtube.com/embed/4ezde5-nZZw"

|

||||

title="YouTube video player" frameborder="0"

|

||||

allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share"

|

||||

allowfullscreen>

|

||||

</iframe>

|

||||

<br>

|

||||

<strong>Watch:</strong> Heatmaps using Ultralytics YOLOv8

|

||||

</p>

|

||||

|

||||

## Why Choose Heatmaps for Data Analysis?

|

||||

|

||||

- **Intuitive Data Distribution Visualization:** Heatmaps simplify the comprehension of data concentration and distribution, converting complex datasets into easy-to-understand visual formats.

|

||||

- **Efficient Pattern Detection:** By visualizing data in heatmap format, it becomes easier to spot trends, clusters, and outliers, facilitating quicker analysis and insights.

|

||||

- **Enhanced Spatial Analysis and Decision-Making:** Heatmaps are instrumental in illustrating spatial relationships, aiding in decision-making processes in sectors such as business intelligence, environmental studies, and urban planning.

|

||||

|

||||

## Real World Applications

|

||||

|

||||

| Transportation | Retail |

|

||||

|:-----------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------:|

|

||||

|  |  |

|

||||

| Ultralytics YOLOv8 Transportation Heatmap | Ultralytics YOLOv8 Retail Heatmap |

|

||||

|

||||

!!! tip "Heatmap Configuration"

|

||||

|

||||

- `heatmap_alpha`: Ensure this value is within the range (0.0 - 1.0).

|

||||

- `decay_factor`: Used for removing heatmap after an object is no longer in the frame, its value should also be in the range (0.0 - 1.0).

|

||||

|

||||

!!! Example "Heatmaps using Ultralytics YOLOv8 Example"

|

||||

|

||||

=== "Heatmap"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

from ultralytics.solutions import heatmap

|

||||

import cv2

|

||||

|

||||

model = YOLO("yolov8n.pt")

|

||||

cap = cv2.VideoCapture("path/to/video/file.mp4")

|

||||

assert cap.isOpened(), "Error reading video file"

|

||||

w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

|

||||

|

||||

# Video writer

|

||||

video_writer = cv2.VideoWriter("heatmap_output.avi",

|

||||

cv2.VideoWriter_fourcc(*'mp4v'),

|

||||

fps,

|

||||

(w, h))

|

||||

|

||||

# Init heatmap

|

||||

heatmap_obj = heatmap.Heatmap()

|

||||

heatmap_obj.set_args(colormap=cv2.COLORMAP_PARULA,

|

||||

imw=w,

|

||||

imh=h,

|

||||

view_img=True,

|

||||

shape="circle")

|

||||

|

||||

while cap.isOpened():

|

||||

success, im0 = cap.read()

|

||||

if not success:

|

||||

print("Video frame is empty or video processing has been successfully completed.")

|

||||

break

|

||||

tracks = model.track(im0, persist=True, show=False)

|

||||

|

||||

im0 = heatmap_obj.generate_heatmap(im0, tracks)

|

||||

video_writer.write(im0)

|

||||

|

||||

cap.release()

|

||||

video_writer.release()

|

||||

cv2.destroyAllWindows()

|

||||

|

||||

```

|

||||

|

||||

=== "Line Counting"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

from ultralytics.solutions import heatmap

|

||||

import cv2

|

||||

|

||||

model = YOLO("yolov8n.pt")

|

||||

cap = cv2.VideoCapture("path/to/video/file.mp4")

|

||||

assert cap.isOpened(), "Error reading video file"

|

||||

w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

|

||||

|

||||

# Video writer

|

||||

video_writer = cv2.VideoWriter("heatmap_output.avi",

|

||||

cv2.VideoWriter_fourcc(*'mp4v'),

|

||||

fps,

|

||||

(w, h))

|

||||

|

||||

line_points = [(20, 400), (1080, 404)] # line for object counting

|

||||

|

||||

# Init heatmap

|

||||

heatmap_obj = heatmap.Heatmap()

|

||||

heatmap_obj.set_args(colormap=cv2.COLORMAP_PARULA,

|

||||

imw=w,

|

||||

imh=h,

|

||||

view_img=True,

|

||||

shape="circle",

|

||||

count_reg_pts=line_points)

|

||||

|

||||

while cap.isOpened():

|

||||

success, im0 = cap.read()

|

||||

if not success:

|

||||

print("Video frame is empty or video processing has been successfully completed.")

|

||||

break

|

||||

tracks = model.track(im0, persist=True, show=False)

|

||||

|

||||

im0 = heatmap_obj.generate_heatmap(im0, tracks)

|

||||

video_writer.write(im0)

|

||||

|

||||

cap.release()

|

||||

video_writer.release()

|

||||

cv2.destroyAllWindows()

|

||||

```

|

||||

|

||||

=== "Region Counting"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

from ultralytics.solutions import heatmap

|

||||

import cv2

|

||||

|

||||

model = YOLO("yolov8n.pt")

|

||||

cap = cv2.VideoCapture("path/to/video/file.mp4")

|

||||

assert cap.isOpened(), "Error reading video file"

|

||||

w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

|

||||

|

||||

# Video writer

|

||||

video_writer = cv2.VideoWriter("heatmap_output.avi",

|

||||

cv2.VideoWriter_fourcc(*'mp4v'),

|

||||

fps,

|

||||

(w, h))

|

||||

|

||||

# Define region points

|

||||

region_points = [(20, 400), (1080, 404), (1080, 360), (20, 360)]

|

||||

|

||||

# Init heatmap

|

||||

heatmap_obj = heatmap.Heatmap()

|

||||

heatmap_obj.set_args(colormap=cv2.COLORMAP_PARULA,

|

||||

imw=w,

|

||||

imh=h,

|

||||

view_img=True,

|

||||

shape="circle",

|

||||

count_reg_pts=region_points)

|

||||

|

||||

while cap.isOpened():

|

||||

success, im0 = cap.read()

|

||||

if not success:

|

||||

print("Video frame is empty or video processing has been successfully completed.")

|

||||

break

|

||||

tracks = model.track(im0, persist=True, show=False)

|

||||

|

||||

im0 = heatmap_obj.generate_heatmap(im0, tracks)

|

||||

video_writer.write(im0)

|

||||

|

||||

cap.release()

|

||||

video_writer.release()

|

||||

cv2.destroyAllWindows()

|

||||

```

|

||||

|

||||

=== "Im0"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

from ultralytics.solutions import heatmap

|

||||

import cv2

|

||||

|

||||

model = YOLO("yolov8s.pt") # YOLOv8 custom/pretrained model

|

||||

|

||||

im0 = cv2.imread("path/to/image.png") # path to image file

|

||||

h, w = im0.shape[:2] # image height and width

|

||||

|

||||

# Heatmap Init

|

||||

heatmap_obj = heatmap.Heatmap()

|

||||

heatmap_obj.set_args(colormap=cv2.COLORMAP_PARULA,

|

||||

imw=w,

|

||||

imh=h,

|

||||

view_img=True,

|

||||

shape="circle")

|

||||

|

||||

results = model.track(im0, persist=True)

|

||||

im0 = heatmap_obj.generate_heatmap(im0, tracks=results)

|

||||

cv2.imwrite("ultralytics_output.png", im0)

|

||||

```

|

||||

|

||||

=== "Specific Classes"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

from ultralytics.solutions import heatmap

|

||||

import cv2

|

||||

|

||||

model = YOLO("yolov8n.pt")

|

||||

cap = cv2.VideoCapture("path/to/video/file.mp4")

|

||||

assert cap.isOpened(), "Error reading video file"

|

||||

w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

|

||||

|

||||

# Video writer

|

||||

video_writer = cv2.VideoWriter("heatmap_output.avi",

|

||||

cv2.VideoWriter_fourcc(*'mp4v'),

|

||||

fps,

|

||||

(w, h))

|

||||

|

||||

classes_for_heatmap = [0, 2] # classes for heatmap

|

||||

|

||||

# Init heatmap

|

||||

heatmap_obj = heatmap.Heatmap()

|

||||

heatmap_obj.set_args(colormap=cv2.COLORMAP_PARULA,

|

||||

imw=w,

|

||||

imh=h,

|

||||

view_img=True,

|

||||

shape="circle")

|

||||

|

||||

while cap.isOpened():

|

||||

success, im0 = cap.read()

|

||||

if not success:

|

||||

print("Video frame is empty or video processing has been successfully completed.")

|

||||

break

|

||||

tracks = model.track(im0, persist=True, show=False,

|

||||

classes=classes_for_heatmap)

|

||||

|

||||

im0 = heatmap_obj.generate_heatmap(im0, tracks)

|

||||

video_writer.write(im0)

|

||||

|

||||

cap.release()

|

||||

video_writer.release()

|

||||

cv2.destroyAllWindows()

|

||||

```

|

||||

|

||||

### Arguments `set_args`

|

||||

|

||||

| Name | Type | Default | Description |

|

||||

|-----------------------|----------------|-------------------|-----------------------------------------------------------|

|

||||

| `view_img` | `bool` | `False` | Display the frame with heatmap |

|

||||

| `colormap` | `cv2.COLORMAP` | `None` | cv2.COLORMAP for heatmap |

|

||||

| `imw` | `int` | `None` | Width of Heatmap |

|

||||

| `imh` | `int` | `None` | Height of Heatmap |

|

||||

| `heatmap_alpha` | `float` | `0.5` | Heatmap alpha value |

|

||||

| `count_reg_pts` | `list` | `None` | Object counting region points |

|

||||

| `count_txt_thickness` | `int` | `2` | Count values text size |

|

||||

| `count_txt_color` | `RGB Color` | `(0, 0, 0)` | Foreground color for Object counts text |

|

||||

| `count_color` | `RGB Color` | `(255, 255, 255)` | Background color for Object counts text |

|

||||

| `count_reg_color` | `RGB Color` | `(255, 0, 255)` | Counting region color |

|

||||

| `region_thickness` | `int` | `5` | Counting region thickness value |

|

||||

| `decay_factor` | `float` | `0.99` | Decay factor for heatmap area removal after specific time |

|

||||

| `shape` | `str` | `circle` | Heatmap shape for display "rect" or "circle" supported |

|

||||

| `line_dist_thresh` | `int` | `15` | Euclidean Distance threshold for line counter |

|

||||

|

||||

### Arguments `model.track`

|

||||

|

||||

| Name | Type | Default | Description |

|

||||

|-----------|---------|----------------|-------------------------------------------------------------|

|

||||

| `source` | `im0` | `None` | source directory for images or videos |

|

||||

| `persist` | `bool` | `False` | persisting tracks between frames |

|

||||

| `tracker` | `str` | `botsort.yaml` | Tracking method 'bytetrack' or 'botsort' |

|

||||

| `conf` | `float` | `0.3` | Confidence Threshold |

|

||||

| `iou` | `float` | `0.5` | IOU Threshold |

|

||||

| `classes` | `list` | `None` | filter results by class, i.e. classes=0, or classes=[0,2,3] |

|

||||

|

||||

### Heatmap COLORMAPs

|

||||

|

||||

| Colormap Name | Description |

|

||||

|---------------------------------|----------------------------------------|

|

||||

| `cv::COLORMAP_AUTUMN` | Autumn color map |

|

||||

| `cv::COLORMAP_BONE` | Bone color map |

|

||||

| `cv::COLORMAP_JET` | Jet color map |

|

||||

| `cv::COLORMAP_WINTER` | Winter color map |

|

||||

| `cv::COLORMAP_RAINBOW` | Rainbow color map |

|

||||

| `cv::COLORMAP_OCEAN` | Ocean color map |

|

||||

| `cv::COLORMAP_SUMMER` | Summer color map |

|

||||

| `cv::COLORMAP_SPRING` | Spring color map |

|

||||

| `cv::COLORMAP_COOL` | Cool color map |

|

||||

| `cv::COLORMAP_HSV` | HSV (Hue, Saturation, Value) color map |

|

||||

| `cv::COLORMAP_PINK` | Pink color map |

|

||||

| `cv::COLORMAP_HOT` | Hot color map |

|

||||

| `cv::COLORMAP_PARULA` | Parula color map |

|

||||

| `cv::COLORMAP_MAGMA` | Magma color map |

|

||||

| `cv::COLORMAP_INFERNO` | Inferno color map |

|

||||

| `cv::COLORMAP_PLASMA` | Plasma color map |

|

||||

| `cv::COLORMAP_VIRIDIS` | Viridis color map |

|

||||

| `cv::COLORMAP_CIVIDIS` | Cividis color map |

|

||||

| `cv::COLORMAP_TWILIGHT` | Twilight color map |

|

||||

| `cv::COLORMAP_TWILIGHT_SHIFTED` | Shifted Twilight color map |

|

||||

| `cv::COLORMAP_TURBO` | Turbo color map |

|

||||

| `cv::COLORMAP_DEEPGREEN` | Deep Green color map |

|

||||

|

||||

These colormaps are commonly used for visualizing data with different color representations.

|

||||

206

docs/en/guides/hyperparameter-tuning.md

Normal file

206

docs/en/guides/hyperparameter-tuning.md

Normal file

@ -0,0 +1,206 @@

|

||||

---

|

||||

comments: true

|

||||

description: Dive into hyperparameter tuning in Ultralytics YOLO models. Learn how to optimize performance using the Tuner class and genetic evolution.

|

||||

keywords: Ultralytics, YOLO, Hyperparameter Tuning, Tuner Class, Genetic Evolution, Optimization

|

||||

---

|

||||

|

||||

# Ultralytics YOLO Hyperparameter Tuning Guide

|

||||

|

||||

## Introduction

|

||||

|

||||

Hyperparameter tuning is not just a one-time set-up but an iterative process aimed at optimizing the machine learning model's performance metrics, such as accuracy, precision, and recall. In the context of Ultralytics YOLO, these hyperparameters could range from learning rate to architectural details, such as the number of layers or types of activation functions used.

|

||||

|

||||

### What are Hyperparameters?

|

||||

|

||||

Hyperparameters are high-level, structural settings for the algorithm. They are set prior to the training phase and remain constant during it. Here are some commonly tuned hyperparameters in Ultralytics YOLO:

|

||||

|

||||

- **Learning Rate** `lr0`: Determines the step size at each iteration while moving towards a minimum in the loss function.

|

||||

- **Batch Size** `batch`: Number of images processed simultaneously in a forward pass.

|

||||

- **Number of Epochs** `epochs`: An epoch is one complete forward and backward pass of all the training examples.

|

||||

- **Architecture Specifics**: Such as channel counts, number of layers, types of activation functions, etc.

|

||||

|

||||

<p align="center">

|

||||

<img width="640" src="https://user-images.githubusercontent.com/26833433/263858934-4f109a2f-82d9-4d08-8bd6-6fd1ff520bcd.png" alt="Hyperparameter Tuning Visual">

|

||||

</p>

|

||||

|

||||

For a full list of augmentation hyperparameters used in YOLOv8 please refer to the [configurations page](../usage/cfg.md#augmentation-settings).

|

||||

|

||||

### Genetic Evolution and Mutation

|

||||

|

||||

Ultralytics YOLO uses genetic algorithms to optimize hyperparameters. Genetic algorithms are inspired by the mechanism of natural selection and genetics.

|

||||

|

||||

- **Mutation**: In the context of Ultralytics YOLO, mutation helps in locally searching the hyperparameter space by applying small, random changes to existing hyperparameters, producing new candidates for evaluation.

|

||||

- **Crossover**: Although crossover is a popular genetic algorithm technique, it is not currently used in Ultralytics YOLO for hyperparameter tuning. The focus is mainly on mutation for generating new hyperparameter sets.

|

||||

|

||||

## Preparing for Hyperparameter Tuning

|

||||

|

||||

Before you begin the tuning process, it's important to:

|

||||

|

||||

1. **Identify the Metrics**: Determine the metrics you will use to evaluate the model's performance. This could be AP50, F1-score, or others.

|

||||

2. **Set the Tuning Budget**: Define how much computational resources you're willing to allocate. Hyperparameter tuning can be computationally intensive.

|

||||

|

||||

## Steps Involved

|

||||

|

||||

### Initialize Hyperparameters

|

||||

|

||||

Start with a reasonable set of initial hyperparameters. This could either be the default hyperparameters set by Ultralytics YOLO or something based on your domain knowledge or previous experiments.

|

||||

|

||||

### Mutate Hyperparameters

|

||||

|

||||

Use the `_mutate` method to produce a new set of hyperparameters based on the existing set.

|

||||

|

||||

### Train Model

|

||||

|

||||

Training is performed using the mutated set of hyperparameters. The training performance is then assessed.

|

||||

|

||||

### Evaluate Model

|

||||

|

||||

Use metrics like AP50, F1-score, or custom metrics to evaluate the model's performance.

|

||||

|

||||

### Log Results

|

||||

|

||||

It's crucial to log both the performance metrics and the corresponding hyperparameters for future reference.

|

||||

|

||||

### Repeat

|

||||

|

||||

The process is repeated until either the set number of iterations is reached or the performance metric is satisfactory.

|

||||

|

||||

## Usage Example

|

||||

|

||||

Here's how to use the `model.tune()` method to utilize the `Tuner` class for hyperparameter tuning of YOLOv8n on COCO8 for 30 epochs with an AdamW optimizer and skipping plotting, checkpointing and validation other than on final epoch for faster Tuning.

|

||||

|

||||

!!! Example

|

||||

|

||||

=== "Python"

|

||||

|

||||

```python

|

||||

from ultralytics import YOLO

|

||||

|

||||

# Initialize the YOLO model

|

||||

model = YOLO('yolov8n.pt')

|

||||

|

||||

# Tune hyperparameters on COCO8 for 30 epochs

|

||||

model.tune(data='coco8.yaml', epochs=30, iterations=300, optimizer='AdamW', plots=False, save=False, val=False)

|

||||

```

|

||||

|

||||

## Results

|

||||

|

||||

After you've successfully completed the hyperparameter tuning process, you will obtain several files and directories that encapsulate the results of the tuning. The following describes each:

|

||||

|

||||

### File Structure

|

||||

|

||||

Here's what the directory structure of the results will look like. Training directories like `train1/` contain individual tuning iterations, i.e. one model trained with one set of hyperparameters. The `tune/` directory contains tuning results from all the individual model trainings:

|

||||

|

||||

```plaintext

|

||||

runs/

|

||||

└── detect/

|

||||

├── train1/

|

||||

├── train2/

|

||||

├── ...

|

||||

└── tune/

|

||||

├── best_hyperparameters.yaml

|

||||

├── best_fitness.png

|

||||

├── tune_results.csv

|

||||

├── tune_scatter_plots.png

|

||||

└── weights/

|

||||

├── last.pt

|

||||

└── best.pt

|

||||

```

|

||||

|

||||

### File Descriptions

|

||||

|

||||

#### best_hyperparameters.yaml

|

||||

|

||||

This YAML file contains the best-performing hyperparameters found during the tuning process. You can use this file to initialize future trainings with these optimized settings.

|

||||

|

||||

- **Format**: YAML

|

||||

- **Usage**: Hyperparameter results

|

||||

- **Example**:

|

||||

```yaml

|

||||

# 558/900 iterations complete ✅ (45536.81s)

|

||||

# Results saved to /usr/src/ultralytics/runs/detect/tune

|

||||

# Best fitness=0.64297 observed at iteration 498

|

||||

# Best fitness metrics are {'metrics/precision(B)': 0.87247, 'metrics/recall(B)': 0.71387, 'metrics/mAP50(B)': 0.79106, 'metrics/mAP50-95(B)': 0.62651, 'val/box_loss': 2.79884, 'val/cls_loss': 2.72386, 'val/dfl_loss': 0.68503, 'fitness': 0.64297}

|

||||

# Best fitness model is /usr/src/ultralytics/runs/detect/train498

|

||||

# Best fitness hyperparameters are printed below.

|

||||

|

||||

lr0: 0.00269

|

||||

lrf: 0.00288

|

||||

momentum: 0.73375

|

||||

weight_decay: 0.00015

|

||||

warmup_epochs: 1.22935

|

||||

warmup_momentum: 0.1525

|

||||

box: 18.27875

|

||||

cls: 1.32899

|

||||

dfl: 0.56016

|

||||

hsv_h: 0.01148

|

||||

hsv_s: 0.53554

|

||||

hsv_v: 0.13636

|

||||

degrees: 0.0

|

||||

translate: 0.12431

|

||||

scale: 0.07643

|

||||

shear: 0.0

|

||||

perspective: 0.0

|

||||

flipud: 0.0

|

||||

fliplr: 0.08631

|

||||

mosaic: 0.42551

|

||||

mixup: 0.0

|

||||

copy_paste: 0.0

|

||||

```

|

||||

|

||||

#### best_fitness.png

|

||||

|

||||

This is a plot displaying fitness (typically a performance metric like AP50) against the number of iterations. It helps you visualize how well the genetic algorithm performed over time.

|

||||

|

||||

- **Format**: PNG

|

||||

- **Usage**: Performance visualization

|

||||

|

||||

<p align="center">

|

||||

<img width="640" src="https://user-images.githubusercontent.com/26833433/266847423-9d0aea13-d5c4-4771-b06e-0b817a498260.png" alt="Hyperparameter Tuning Fitness vs Iteration">

|

||||

</p>

|

||||

|

||||

#### tune_results.csv

|

||||

|

||||

A CSV file containing detailed results of each iteration during the tuning. Each row in the file represents one iteration, and it includes metrics like fitness score, precision, recall, as well as the hyperparameters used.

|

||||

|

||||

- **Format**: CSV

|

||||

- **Usage**: Per-iteration results tracking.

|

||||

- **Example**:

|

||||

```csv

|

||||

fitness,lr0,lrf,momentum,weight_decay,warmup_epochs,warmup_momentum,box,cls,dfl,hsv_h,hsv_s,hsv_v,degrees,translate,scale,shear,perspective,flipud,fliplr,mosaic,mixup,copy_paste

|

||||

0.05021,0.01,0.01,0.937,0.0005,3.0,0.8,7.5,0.5,1.5,0.015,0.7,0.4,0.0,0.1,0.5,0.0,0.0,0.0,0.5,1.0,0.0,0.0

|

||||

0.07217,0.01003,0.00967,0.93897,0.00049,2.79757,0.81075,7.5,0.50746,1.44826,0.01503,0.72948,0.40658,0.0,0.0987,0.4922,0.0,0.0,0.0,0.49729,1.0,0.0,0.0

|

||||

0.06584,0.01003,0.00855,0.91009,0.00073,3.42176,0.95,8.64301,0.54594,1.72261,0.01503,0.59179,0.40658,0.0,0.0987,0.46955,0.0,0.0,0.0,0.49729,0.80187,0.0,0.0

|

||||

```

|

||||

|

||||

#### tune_scatter_plots.png

|

||||

|

||||

This file contains scatter plots generated from `tune_results.csv`, helping you visualize relationships between different hyperparameters and performance metrics. Note that hyperparameters initialized to 0 will not be tuned, such as `degrees` and `shear` below.

|

||||

|

||||

- **Format**: PNG

|

||||

- **Usage**: Exploratory data analysis

|

||||

|

||||

<p align="center">

|

||||

<img width="1000" src="https://user-images.githubusercontent.com/26833433/266847488-ec382f3d-79bc-4087-a0e0-42fb8b62cad2.png" alt="Hyperparameter Tuning Scatter Plots">

|

||||

</p>

|

||||

|

||||

#### weights/

|

||||

|

||||

This directory contains the saved PyTorch models for the last and the best iterations during the hyperparameter tuning process.

|

||||

|

||||

- **`last.pt`**: The last.pt are the weights from the last epoch of training.

|

||||

- **`best.pt`**: The best.pt weights for the iteration that achieved the best fitness score.

|

||||

|

||||

Using these results, you can make more informed decisions for your future model trainings and analyses. Feel free to consult these artifacts to understand how well your model performed and how you might improve it further.

|

||||

|

||||

## Conclusion

|

||||

|

||||

The hyperparameter tuning process in Ultralytics YOLO is simplified yet powerful, thanks to its genetic algorithm-based approach focused on mutation. Following the steps outlined in this guide will assist you in systematically tuning your model to achieve better performance.

|

||||

|

||||

### Further Reading

|

||||

|

||||

1. [Hyperparameter Optimization in Wikipedia](https://en.wikipedia.org/wiki/Hyperparameter_optimization)

|

||||

2. [YOLOv5 Hyperparameter Evolution Guide](../yolov5/tutorials/hyperparameter_evolution.md)

|

||||

3. [Efficient Hyperparameter Tuning with Ray Tune and YOLOv8](../integrations/ray-tune.md)

|

||||

|

||||

For deeper insights, you can explore the `Tuner` class source code and accompanying documentation. Should you have any questions, feature requests, or need further assistance, feel free to reach out to us on [GitHub](https://github.com/ultralytics/ultralytics/issues/new/choose) or [Discord](https://ultralytics.com/discord).

|

||||

65

docs/en/guides/index.md

Normal file

65

docs/en/guides/index.md

Normal file

@ -0,0 +1,65 @@

|

||||

---

|

||||

comments: true

|

||||

description: In-depth exploration of Ultralytics' YOLO. Learn about the YOLO object detection model, how to train it on custom data, multi-GPU training, exporting, predicting, deploying, and more.

|

||||

keywords: Ultralytics, YOLO, Deep Learning, Object detection, PyTorch, Tutorial, Multi-GPU training, Custom data training, SAHI, Tiled Inference

|

||||

---

|

||||

|

||||

# Comprehensive Tutorials to Ultralytics YOLO

|

||||

|

||||

Welcome to the Ultralytics' YOLO 🚀 Guides! Our comprehensive tutorials cover various aspects of the YOLO object detection model, ranging from training and prediction to deployment. Built on PyTorch, YOLO stands out for its exceptional speed and accuracy in real-time object detection tasks.

|

||||

|

||||

Whether you're a beginner or an expert in deep learning, our tutorials offer valuable insights into the implementation and optimization of YOLO for your computer vision projects. Let's dive in!

|

||||

|

||||

<p align="center">

|

||||

<br>

|

||||

<iframe loading="lazy" width="720" height="405" src="https://www.youtube.com/embed/96NkhsV-W1U"

|

||||

title="YouTube video player" frameborder="0"

|

||||

allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share"

|

||||

allowfullscreen>

|

||||

</iframe>

|

||||

<br>

|

||||

<strong>Watch:</strong> Ultralytics YOLOv8 Guides Overview

|

||||

</p>

|

||||

|

||||

## Guides

|

||||

|

||||

Here's a compilation of in-depth guides to help you master different aspects of Ultralytics YOLO.

|

||||

|

||||

- [YOLO Common Issues](yolo-common-issues.md) ⭐ RECOMMENDED: Practical solutions and troubleshooting tips to the most frequently encountered issues when working with Ultralytics YOLO models.

|

||||